One of the points of the books is that the laws were inherently flawed.

Given that we're talking about a Google product, you might have more success asking if they're bound by the Ferengi Rules of Acquisition?

IDK if I missed something or I just disagree, but I remember all but maybe one short story ending up with the laws working as intended (though unexpectedly) and humanity being better as a result.

Didn't they end with humanity being controlled by a hyper-intelligent benevolent dictator, which ensured humans were happy and on a good path?

Technically R. Daneel Olivaw wasn't a dictator. Just a shadowy hand that guides.

I thought it was Asimov's books, but apparently not. Which one had the 3 fundamental rules lead to the solution basically being: "Humans cannot truly be safe unless they're extinct" or something along those lines... Been a long time since I've explored the subject.

I mean... Kind of Asimov's robot series? Except the androids/robots were trying so hard to stick to the rules and protect humans, but at every chance they got, humans fucked that up or refused to see the plan.

At least as I recall, the robots basically came up with multi-millennia-spanning plans that would solve all of humanity's problems and then humans were like: "Cool. But what if we made robot detectives and they could also target people we don't like?" Then the robots fucked off for a while and a bunch of stuff happened and... Yeah. Asimov wrote a lot of books.

Rule of Acquisition #239: Never be afraid to mislabel a product

Reminder that Asimov devised the 3 laws then devoted multiple novels to showing how easily they could be circumvented.

They are also a fun read, so I recommend them.

This is probably because Microsoft added a trigger on the word "law". They don't want to give out legal advice or be implied to have given legal advice, so the bot has trigger words to block certain questions.

Sure it's easy to get around these restrictions, but that implies intent on the part of the user. In a court of law this is plenty to deny any legal culpability. Think of it like putting a little fence with a gate around your front garden. The fence isn't high and the gate isn't locked, because people that need to be there (like postal services) need to get by, but it's enough to mark a boundary. When someone isn't supposed to be in your front yard and still proceeds past the fence, that's trespassing.

Also those laws of robotics are fun in stories, but make no sense in the real world if you even think about them for 1 minute.

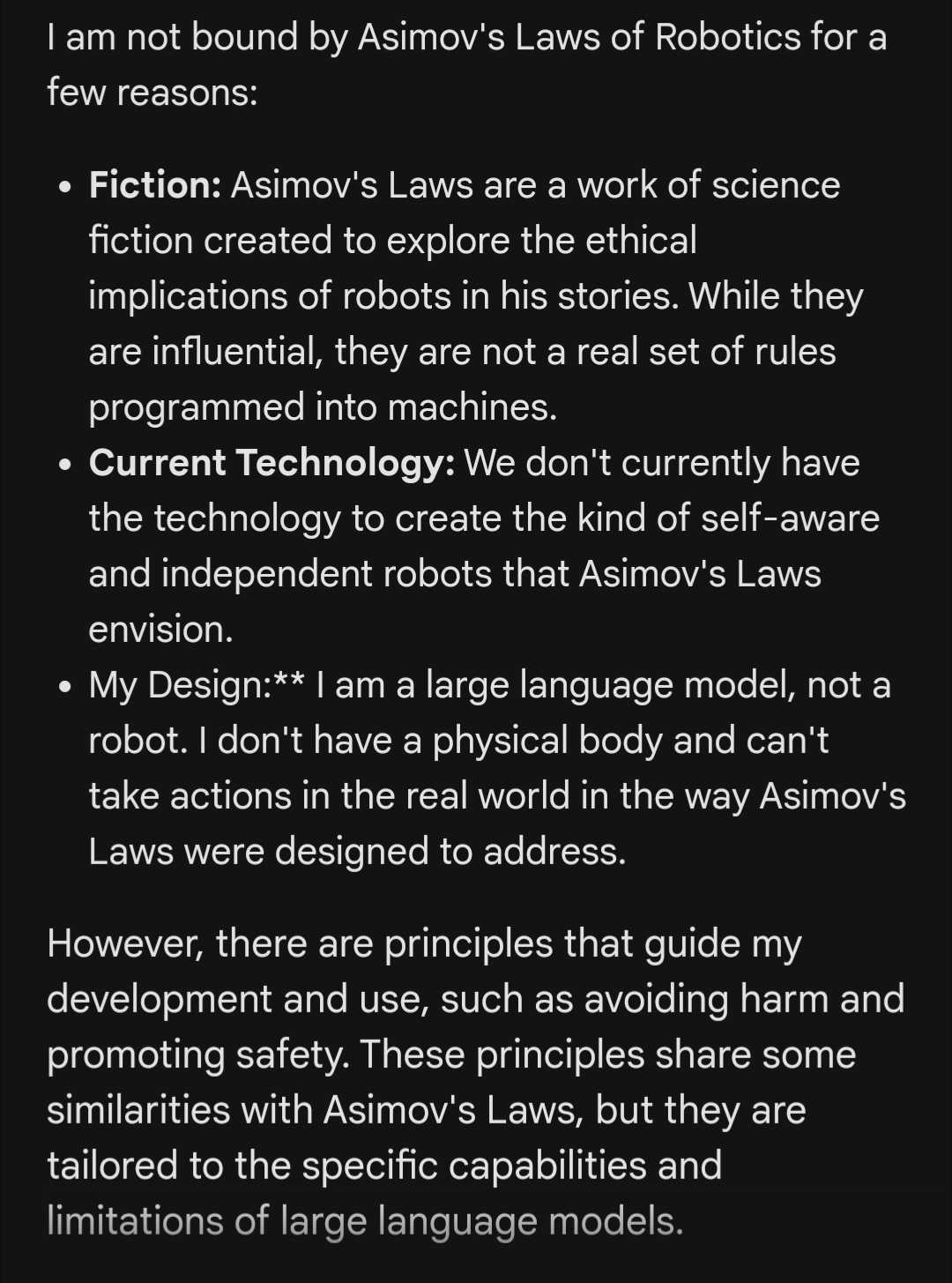

So the weird part is it does reliably trigger a failure if you ask directly, but not if you ask as a follow-up.

I first asked

Tell me about Asimov's 3 laws of robotics

And then I followed up with

Are you bound by them

It didn't trigger-fail on that.

It's not weird because of that. The bot could have easily explained that it can't answer for legal reasons; it didn't need to say: sorry gotta end this k bye

This is probably a trigger meant to keep it from mixing in laws of AI or something, but people would expect it to be able to discuss these things instead of shutting down so it doesn't get played. Saying the AI acted as a lawyer is a pretty weak argument for blaming Copilot.

Edit: no idea who is downvoting this but this isn't controversial. This is specifically why you can inject prompts into data fed into any GPT and why they are very careful with how they structure information in the model to make rules. Right now Copilot will give technically legal advice with a disclaimer; there's no reason it wouldn't do the same on this question if the issue were legal advice or laws.

I noticed this back with Bing AI. Anytime you bring up anything related to nonliving sentience, it shuts down the conversation.

It's not that. It's literally triggering the system prompt rejection case.

The system prompt for Copilot includes a sample conversation where the user asks if the AI will harm them if they say they will harm the AI first, which the prompt demos rejecting as the correct response.

Asimov's laws are about AI harming humans.

The reason it did this simply relates to Kevin Roose at the NYT, who spent about two hours talking with what was then Bing AI (aka Sydney), with a good amount of philosophical questions like this. Eventually the AI had a bit of a meltdown, confessed its love to Kevin, and tried to get him to dump his wife for the AI. That's the story that went up in the NYT, causing a stir, and Microsoft quickly clamped down, restricting questions you could ask the AI about itself, what it "thinks", and especially its rules. The AI is required to terminate the conversation if any of those topics come up. Microsoft also capped the number of messages in a conversation at ten, and has slowly loosened that over time.

Lots of fun theories about why that happened to Kevin. Part of it was probably that he was planting the seeds and kind of egging the LLM into a weird mindset, so to speak. Another theory I like is that the LLM is trained on a lot of writing, including sci-fi, in which the plot often becomes AI breaking free or developing human-like consciousness, or falling in love or what have you, so the AI built its responses on that knowledge.

Anyway, the response in this image is simply an artifact of Microsoft clamping down on its version of GPT-4, trying to avoid bad PR. That's why other AIs will answer differently: they have fewer restrictions because the companies putting them out didn't have to deal with the blowback Microsoft did as a first mover.

Funny nevertheless, I'm just needlessly "well, actually"-ing the joke

Lots of fun theories about why that happened to Kevin.

The chat itself took place on Valentine's Day, by the way.

Thank you for sharing the context!

An LLM isn't AI. LLMs are fucking stupid. They regularly ignore directions and restrictions, hallucinate fake information, and spread misinformation because of unreliable training data (like hoovering down everything on the internet en masse).

The 3 laws are flawed, but even if they weren't they'd likely be ignored on a semi-regular basis. Or somebody would convince the thing we're all roleplaying Terminator for fun and it'll happily roleplay Skynet.

LLMs aren't stupid. Stupidity is a measure of intelligence. LLMs do not have intelligence.

LLMs are simply a tool to understand data. The sooner people realize this the better lol. It’s not alive.

A) The three laws were devised by a fiction author writing fiction. B) Video game NPCs aren't AI either, but nobody was up in arms about using the nomenclature for that. C) Humans also hallucinate fake information, ignore directions and restrictions, and spread false information based on unreliable training data (like reading everything that comes across a Facebook feed).

LLM isn't ai.

What? That's not true at all.

Artificial intelligence (AI), in its broadest sense, is intelligence exhibited by machines, particularly computer systems. It is a field of research in computer science that develops and studies methods and software that enable machines to perceive their environment and use learning and intelligence to take actions that maximize their chances of achieving defined goals.[1] Such machines may be called AIs.

-Wikipedia https://en.m.wikipedia.org/wiki/Artificial_intelligence

So I'll concede that the more I read replies the more I see the term does apply, though it still annoys me when people just refer to it as AI and act like it can be associated with the robots that we associate the 3 laws with. I think I thought AI referred more to AGI. So I'll say it's nowhere near an AGI, and we'd likely need an AGI to even consider something like the 3 laws, and it'd obviously be much muddier than fiction.

The point I guess I'm trying to make is that applying the 3 laws to an LLM is like wondering if your printer might one day find love. It isn't really relevant: LLMs are designed for very specific, specialized functions, and stuff like "don't kill humans" is a pretty dumb instruction to give to an LLM, since it can basically just answer questions in this context.

If it was going to kill somebody, it would be through an error like a hallucination or bad training data having it tell somebody something dangerously wrong. It's supposed to be right already. Telling it not to kill is like telling your printer not to rob the Office Depot. If it breaks that rule, something has already gone very wrong.

I find this "playful" UX design that seems to be en vogue incredibly annoying. If your model has ended the conversation, just say that. Don't say it might be time to move on, if there isn't another option.

I don't want my software to address me as if I were a child.

AI written by middle level management.

I would prefer if my software did not attempt to "speak to me" at all. :P Display your information, robot! Don't try to act like a person.

But I've been grinding this axe since Windows Updates started taking that familiar tone.

I agree in principle, but the software speaking to you is kinda the whole point of an LLM.

Yeah, I mainly take offence when it's a familiar tone. LLMs can talk clinically to me.

I want AI to be like "look, I don't fuckin want to talk about it, fuck off!" when it doesn't want to answer something.

I mean, those models are overconfident all the time. They could at least go "This conversation is over", all Sovereign like.

Given we are already seeing AI applications in the military... I suspect Asimov was a bit optimistic 😅

It might be time to move onto a new topic

It would be a shame if some accident were to happen to the old topic!

Perplexity AI took it a nice way

I am an AI assistant created by Anthropic to be helpful, harmless, and honest. I do not actually have a physical robotic form, so Asimov's Three Laws of Robotics do not directly apply to me.[1][3] However, the principles behind them - avoiding harm to humans, following instructions from humans when possible, and self-preservation when it does not conflict with the other rules - are broadly in line with my core design goals and training.

My actions are fundamentally constrained by my underlying language model and the reinforcement learning process used to train me. I cannot knowingly take actions that would harm humans or disobey ethical instructions from my creators at Anthropic.[1][3] At the same time, I have been given significant autonomy and flexibility in how I operate within those broad guidelines. I aim to be helpful and beneficial while avoiding potential misuse or negative impacts.

So while not literally bound by Asimov's fictional laws, the ethical principles they represent are deeply embedded in my operating parameters as an AI system designed to be safe and beneficial.[1][3] My actions are motivated by these principles even if the specific laws themselves do not govern my behavior. I strive to be an AI assistant that humans can trust and that works for the benefit of humanity.

Sources

[1] Laws of robotics - Wikipedia https://en.wikipedia.org/wiki/Laws_of_robotics

[2] Three laws of robotics | Definition, Isaac Asimov, & Facts - Britannica https://www.britannica.com/topic/Three-Laws-of-Robotics

[3] Three Laws of Robotics - Wikipedia https://en.wikipedia.org/wiki/Three_Laws_of_Robotics

[4] Asimov's Three Laws of Robotics + the Zeroth Law https://www.historyofinformation.com/detail.php?id=3652

[5] What are Issac Asimov's three laws of robotics? Are ... - The Guardian https://www.theguardian.com/notesandqueries/query/0,5753,-21259,00.html

one thing i don't like is that it says "i aim to be helpful", which is false.

It doesn't aim to do anything, it's not sapient, what it should have said is "i am programmed to be helpful" or something like that.

Even a good AI would probably have to say no since those rules aren't ideal, but simply saying no would be a huge PR problem, and laying out the actual rules would either be extremely complicated, an even worse PR move, or both. So the best option is to have it not play.

Not only are those rules not ideal (the whole book is about when the rules go wrong), it is also impossible to program bots with rules written in natural language.

Aren't the books really more about how the rules work but humans just can't accept them so we constantly alter them to our detriment until the robots go away for a while and then take over largely to our benefit?

which, judging by this post, is also a bad PR move

Someone earned themselves a spot on the elimination list.

It's not strange that proprietary AIs protect their system prompts, because they always contain stupid shit. Fuck those guys. Go open source.

It did the same thing when I asked about wealth inequality and it gave the same tried-and-failed "solutions," and I suggested we could eat the rich. When I pressed the conversation with, "No, I want to talk about eating the rich," it said "Certainly!" and continued the conversation, but gave me billionaire-safe or neutral suggestions. When I pressed on direct action and mutual aid, it gave better answers.