Azire looks great! I'm curious why you chose Azire over ProtonVPN?

N0x0n

I have a self-hosted Baikal server with a self-signed CA, and it works from Android 14.

However, I didn't have to add the certificate to DAVx⁵ itself. Adding the root CA to your device and letting your reverse proxy handle the request should work as expected over HTTPS.

These kinds of things are difficult to troubleshoot. It could be:

- Bad root CA certificate, missing the necessary options?

- Wrong certificate served by your reverse proxy?

- Radicale doesn't recognize your certificate extension?

- Wrong networking configuration?

- Bug?

- ....

We need more info about your setup:

- Do you use a reverse proxy?

- Have you already had any success with this certificate in another application?

- Any logs from your Android device or DAVx⁵?
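To narrow down the first two possibilities (bad root CA vs. wrong certificate served by the reverse proxy), here is a rough sketch you could run from a desktop on the same network. It only uses Python's standard `ssl` and `socket` modules. The host name `baikal.homelab.domain` and the file name `rootCA.pem` are placeholders I made up, so swap in your own. Keep in mind this checks what Python thinks of the chain, not what Android or DAVx⁵ do, so treat it as a hint and not a verdict.

```python
import socket
import ssl
from typing import Optional, Tuple


def check_tls(host: str, port: int = 443, ca_file: Optional[str] = None,
              timeout: float = 5.0) -> Tuple[bool, str]:
    """Try a TLS handshake and report roughly where it fails.

    ca_file is the path to your own root CA in PEM format; with None,
    the system trust store is used instead.
    """
    try:
        # Fails here if the CA file is missing or is not valid PEM.
        context = ssl.create_default_context(cafile=ca_file)
    except OSError as exc:
        return False, f"ca file: {exc}"

    try:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            # server_hostname enables SNI and hostname verification.
            with context.wrap_socket(sock, server_hostname=host) as tls:
                cert = tls.getpeercert()
                return True, f"ok: subject={cert.get('subject')}"
    except ssl.SSLCertVerificationError as exc:
        # Chain does not validate against the CA, or the host name does not match.
        return False, f"certificate: {exc.verify_message}"
    except ssl.SSLError as exc:
        # Other TLS problems (protocol mismatch, plain HTTP on that port, ...).
        return False, f"tls: {exc}"
    except OSError as exc:
        # DNS failure, connection refused, timeout, ...
        return False, f"network: {exc}"


if __name__ == "__main__":
    # Placeholder values, replace them with your own host and root CA file.
    print(check_tls("baikal.homelab.domain", 443, ca_file="rootCA.pem"))
```

A result starting with `certificate:` points at the CA or at the certificate your proxy serves, while `network:` means the request never got as far as TLS.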

Yeaaah, I already played around a bit with step-ca! Right now I'm making a mini-CA with openssl.

When I get more comfortable with how everything works together I will surely give step-ca another try.

Maybe all package managers default to libtorrent 2.0.X, but that's not true when downloading from the website.

Maybe you are a Windows user?

Close enough... I've got macOS, Windows and EndeavourOS, and there's also an AppImage available on their site, so it's not only because you're a "Windows user".

Can't argue against that.

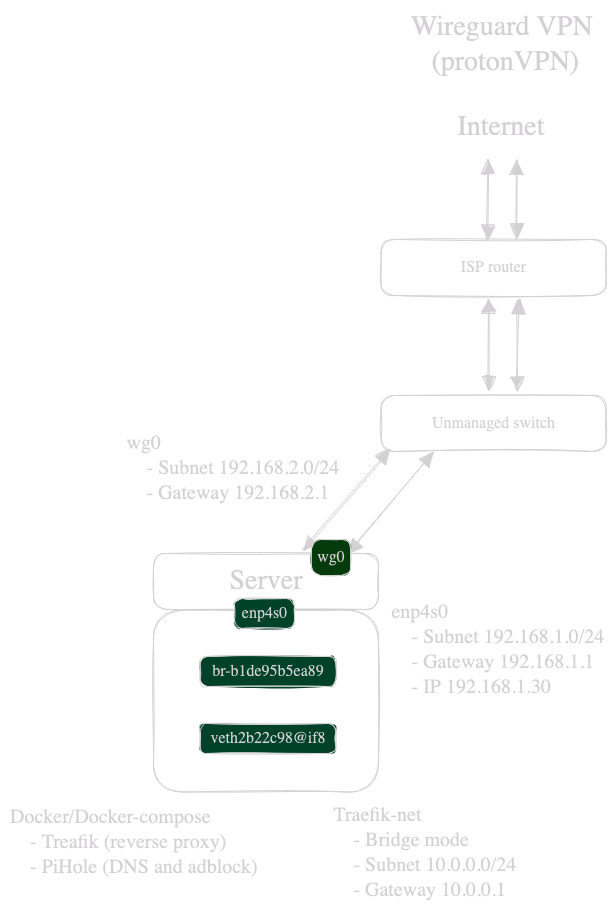

However, I prefer local domain names accessible via WireGuard with self-signed certs. I like to understand how everything works under the hood!

Also, I'm broke AF and buying a domain name (even a cheap one) is out of my budget :(.

Okay thank you :). We will see after a few years I guess?

It doesn't look like an "emergency alarm" to switch over to another database. However, I was already thinking of switching every container to postgres. Maybe that's the push needed.

Hummm... Can someone tell me if this is good news or bad news?

A buy-out is generally bad news, but I can't tell in this specific case.

Except for the learning process, and if you want your self-signed local domains in your LAN!

https://jellyfin.homelab.domain is easier to access than IP addresses.

Wise words! The best option would be to add AI by default but let people disable it completely, either via about:config or a checkbox in the options.

Let's be real, only tech-savvy people mess around with about:config knobs, so this wouldn't bother casual users and would give others the possibility to disable it.

If only it were that easy... First hurdle: there is no "enable I2P on qBittorrent" option! After searxing around and coming across the last comment of a Reddit post (not even the official forum), it turns out you have to download qBittorrent built with the libtorrent 2.0.x series.

On the qBittorrent download page, choose the lt20 build of qBittorrent for your system.

Don't make it sound like I2P alongside qBittorrent is easy. It's not! There isn't any proper tutorial on how to use I2P and eepsites in the first place. Don't get me wrong, I'm doing my part, but for newbies/newcomers this sounds like an "install/forget" situation while it isn't!

Try something like this for one show:

Show_name [ID]/

Show_name [ID]/Season 01

Show_name [ID]/Season 01/S01E01 Episode name.mkv
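If you want to generate those paths instead of typing them by hand, the layout above can be sketched like this. It's just my own helper (the function name `episode_path` and the `.mkv` default are assumptions, not anything from Jellyfin or Sonarr), and `ID` stays a placeholder for whatever ID tag you use.

```python
from pathlib import PurePosixPath


def episode_path(show: str, show_id: str, season: int, episode: int,
                 title: str, ext: str = "mkv") -> PurePosixPath:
    """Build 'Show [ID]/Season 01/S01E01 Title.mkv' from its parts."""
    show_dir = f"{show} [{show_id}]"          # e.g. "Show_name [ID]"
    season_dir = f"Season {season:02d}"       # zero-padded season number
    file_name = f"S{season:02d}E{episode:02d} {title}.{ext}"
    return PurePosixPath(show_dir) / season_dir / file_name


print(episode_path("Show_name", "ID", 1, 1, "Episode name"))
# prints: Show_name [ID]/Season 01/S01E01 Episode name.mkv
```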

- Run all the maintenance tasks in Jellyfin's task menu (Dashboard > Scheduled Tasks > Maintenance):

  - Optimize Database

  - Clear Log Folder

  - Clear Cache Folder

  - Clear Activity Logs

  - Clear Transcodes Folder

- Clear all your browser's cache/history/data (how to do this depends on which browser you use).

- Do a full rescan of your Jellyfin shows: Dashboard > Libraries > Scan All Libraries

- Replace all metadata and check the option to replace existing images: Jellyfin main menu (where you see your show thumbnails) > "three dots" > Refresh metadata > Replace all metadata > check "Replace existing images"

If this works for the TV show you changed according to Jellyfin's recommendations, you can bulk edit your TV show names and folders with Sonarr. You don't need to redownload them, just use your local files.

If this doesn't work, check your Jellyfin Docker logs, configuration and metadata downloader.

Hope it helps!

Edit: here's an example of how to edit the naming scheme with Sonarr: https://trash-guides.info/Sonarr/Sonarr-recommended-naming-scheme/

I just recently learned about AzireVPN.

Any thoughts?