Working setup files for my ARM64 Ubuntu host server. The postgres, lemmy, lemmy-ui, and pictrs containers are all on the lemmyinternal network only. The nginx:1-alpine container is on both networks.

docker-compose.yml:


```yaml
version: "3.3"

# JatNote = Note from Jattatak for working YML at this time (Jun 8, 2023)

networks:
  # communication to web and clients
  lemmyexternalproxy:
  # communication between lemmy services
  lemmyinternal:
    driver: bridge
    # JatNote: The internal mode for this network is in the official doc, but it is what broke my setup.
    # I left it out to fix it. I advise the same.
    # internal: true

services:
  proxy:
    image: nginx:1-alpine
    networks:
      - lemmyinternal
      - lemmyexternalproxy
    ports:
      # only ports facing any connection from outside
      # JatNote: Ports mapped to nonsense to prevent collision with NGINX Proxy Manager
      - 680:80
      - 6443:443
    volumes:
      - ./nginx.conf:/etc/nginx/nginx.conf:ro
      # setup your certbot and letsencrypt config
      - ./certbot:/var/www/certbot
      - ./letsencrypt:/etc/letsencrypt/live
    restart: always
    depends_on:
      - pictrs
      - lemmy-ui

  lemmy:
    # JatNote: I am running on an ARM Ubuntu Virtual Server. Therefore, this is my image. I suggest using matching lemmy/lemmy-ui versions.
    image: dessalines/lemmy:0.17.3-linux-arm64
    hostname: lemmy
    networks:
      - lemmyinternal
    restart: always
    environment:
      - RUST_LOG="warn,lemmy_server=info,lemmy_api=info,lemmy_api_common=info,lemmy_api_crud=info,lemmy_apub=info,lemmy_db_schema=info,lemmy_db_views=info,lemmy_db_views_actor=info,lemmy_db_views_moderator=info,lemmy_routes=info,lemmy_utils=info,lemmy_websocket=info"
    volumes:
      - ./lemmy.hjson:/config/config.hjson
    depends_on:
      - postgres
      - pictrs

  lemmy-ui:
    # JatNote: Again, ARM based image
    image: dessalines/lemmy-ui:0.17.3-linux-arm64
    hostname: lemmy-ui
    networks:
      - lemmyinternal
    environment:
      # this needs to match the hostname defined in the lemmy service
      - LEMMY_UI_LEMMY_INTERNAL_HOST=lemmy:8536
      # set the outside hostname here
      - LEMMY_UI_LEMMY_EXTERNAL_HOST=lemmy.bulwarkob.com:1236
      - LEMMY_HTTPS=true
    depends_on:
      - lemmy
    restart: always

  pictrs:
    image: asonix/pictrs
    # this needs to match the pictrs url in lemmy.hjson
    hostname: pictrs
    networks:
      - lemmyinternal
    environment:
      - PICTRS__API_KEY=API_KEY
    user: 991:991
    volumes:
      - ./volumes/pictrs:/mnt
    restart: always

  postgres:
    image: postgres:15-alpine
    # this needs to match the database host in lemmy.hjson
    hostname: postgres
    networks:
      - lemmyinternal
    environment:
      - POSTGRES_USER=AUser
      - POSTGRES_PASSWORD=APassword
      - POSTGRES_DB=lemmy
    volumes:
      - ./volumes/postgres:/var/lib/postgresql/data
    restart: always
```
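The network layout described at the top (only the nginx proxy is attached to lemmyexternalproxy; every service stays on lemmyinternal) can be checked mechanically. Below is a minimal Python sketch, assuming the compose file has already been loaded into a plain dict (parsing the YAML itself is not shown). `SERVICES` and `check_network_layout` are hypothetical names for illustration; the network lists are copied from the file above.

```python
# Minimal sketch: verify that only the proxy service is exposed to the external network.
# SERVICES is a hypothetical in-memory copy of each service's "networks" list from
# docker-compose.yml above; loading the YAML file itself is not shown.
SERVICES = {
    "proxy": ["lemmyinternal", "lemmyexternalproxy"],
    "lemmy": ["lemmyinternal"],
    "lemmy-ui": ["lemmyinternal"],
    "pictrs": ["lemmyinternal"],
    "postgres": ["lemmyinternal"],
}


def check_network_layout(services, internal="lemmyinternal",
                         external="lemmyexternalproxy", proxy="proxy"):
    """Return a list of problems; an empty list means the layout matches the description."""
    problems = []
    for name, networks in services.items():
        # Every service must be able to reach the others over the internal network.
        if internal not in networks:
            problems.append(f"{name} is not on {internal}")
        # Only the proxy may sit on the network that faces NPM and the outside world.
        if external in networks and name != proxy:
            problems.append(f"{name} should not be on {external}")
    if external not in services.get(proxy, []):
        problems.append(f"{proxy} is not on {external}")
    return problems


if __name__ == "__main__":
    issues = check_network_layout(SERVICES)
    print("layout OK" if not issues else "\n".join(issues))
```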

lemmy.hjson:


```hjson
{
  # for more info about the config, check out the documentation
  # https://join-lemmy.org/docs/en/administration/configuration.html
  # only few config options are covered in this example config

  setup: {
    # username for the admin user
    admin_username: "AUser"
    # password for the admin user
    admin_password: "APassword"
    # name of the site (can be changed later)
    site_name: "Bulwark of Boredom"
  }

  opentelemetry_url: "http://otel:4317"

  # the domain name of your instance (eg "lemmy.ml")
  hostname: "lemmy.bulwarkob.com"
  # address where lemmy should listen for incoming requests
  bind: "0.0.0.0"
  # port where lemmy should listen for incoming requests
  port: 8536
  # Whether the site is available over TLS. Needs to be true for federation to work.
  # JatNote: I was advised that this is not necessary. It does work without it.
  # tls_enabled: true

  # pictrs host
  pictrs: {
    url: "http://pictrs:8080/"
    # api_key: "API_KEY"
  }

  # settings related to the postgresql database
  database: {
    # name of the postgres database for lemmy
    database: "lemmy"
    # username to connect to postgres (must match POSTGRES_USER in docker-compose.yml)
    user: "AUser"
    # password to connect to postgres (must match POSTGRES_PASSWORD in docker-compose.yml)
    password: "APassword"
    # host where postgres is running
    host: "postgres"
    # port where postgres can be accessed
    port: 5432
    # maximum number of active sql connections
    pool_size: 5
  }
}
```
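Several comments in the two files say a value "needs to match" something in the other file: the database credentials, the postgres and pictrs hostnames, and the lemmy host and port that lemmy-ui uses. A minimal Python sketch of that cross-check follows, assuming both files have already been loaded into dicts (parsing HJSON needs a third-party library and is not shown). `cross_check`, `env_to_dict`, `COMPOSE` and `LEMMY_CFG` are hypothetical names; the sample values are a trimmed copy of the settings above.

```python
from urllib.parse import urlparse


def env_to_dict(env_list):
    """Turn compose-style ["KEY=VALUE", ...] entries into a dict."""
    return dict(item.split("=", 1) for item in env_list)


def cross_check(compose, lemmy_cfg):
    """Compare docker-compose.yml values with lemmy.hjson and return mismatch messages."""
    problems = []
    services = compose["services"]

    # Database name, user, and password must equal what the postgres container was created with.
    pg = services["postgres"]
    pg_env = env_to_dict(pg["environment"])
    db = lemmy_cfg["database"]
    for env_key, cfg_key in (("POSTGRES_USER", "user"),
                             ("POSTGRES_PASSWORD", "password"),
                             ("POSTGRES_DB", "database")):
        if pg_env.get(env_key) != db.get(cfg_key):
            problems.append(f"database.{cfg_key} != {env_key}")
    if db.get("host") != pg["hostname"]:
        problems.append("database.host != postgres hostname")

    # The pictrs URL must point at the pictrs service hostname.
    if urlparse(lemmy_cfg["pictrs"]["url"]).hostname != services["pictrs"]["hostname"]:
        problems.append("pictrs.url host != pictrs hostname")

    # lemmy-ui must reach lemmy on its hostname and the port lemmy listens on.
    ui_env = env_to_dict(services["lemmy-ui"]["environment"])
    host, _, port = ui_env["LEMMY_UI_LEMMY_INTERNAL_HOST"].partition(":")
    if host != services["lemmy"]["hostname"] or port != str(lemmy_cfg["port"]):
        problems.append("LEMMY_UI_LEMMY_INTERNAL_HOST != lemmy hostname:port")
    return problems


# Hypothetical, trimmed-down copies of the two files above.
COMPOSE = {
    "services": {
        "lemmy": {"hostname": "lemmy"},
        "lemmy-ui": {"environment": ["LEMMY_UI_LEMMY_INTERNAL_HOST=lemmy:8536"]},
        "pictrs": {"hostname": "pictrs"},
        "postgres": {
            "hostname": "postgres",
            "environment": ["POSTGRES_USER=AUser", "POSTGRES_PASSWORD=APassword",
                            "POSTGRES_DB=lemmy"],
        },
    }
}
LEMMY_CFG = {
    "port": 8536,
    "pictrs": {"url": "http://pictrs:8080/"},
    "database": {"database": "lemmy", "user": "AUser", "password": "APassword",
                 "host": "postgres"},
}

if __name__ == "__main__":
    print(cross_check(COMPOSE, LEMMY_CFG) or "all values match")
```

Postgres role names and passwords are compared as exact strings here, so a difference in case such as `AUser` vs `aUser` is reported as a mismatch.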

The following nginx.conf is for the internal proxy, which is included in the docker-compose.yml. This is entirely separate from Nginx Proxy Manager (NPM).

nginx.conf:


```nginx
worker_processes 1;

events {
    worker_connections 1024;
}

http {
    upstream lemmy {
        # this needs to map to the lemmy (server) docker service hostname
        server "lemmy:8536";
    }

    upstream lemmy-ui {
        # this needs to map to the lemmy-ui docker service hostname
        server "lemmy-ui:1234";
    }

    server {
        # this is the port inside docker, not the public one yet
        listen 80;
        # change if needed, this is facing the public web
        server_name localhost;
        server_tokens off;

        gzip on;
        gzip_types text/css application/javascript image/svg+xml;
        gzip_vary on;

        # Upload limit, relevant for pictrs
        client_max_body_size 20M;

        add_header X-Frame-Options SAMEORIGIN;
        add_header X-Content-Type-Options nosniff;
        add_header X-XSS-Protection "1; mode=block";

        # frontend general requests
        location / {
            # distinguish between ui requests and backend
            # don't change lemmy-ui or lemmy here, they refer to the upstream definitions on top
            set $proxpass "http://lemmy-ui";

            if ($http_accept = "application/activity+json") {
                set $proxpass "http://lemmy";
            }
            if ($http_accept = "application/ld+json; profile=\"https://www.w3.org/ns/activitystreams\"") {
                set $proxpass "http://lemmy";
            }
            if ($request_method = POST) {
                set $proxpass "http://lemmy";
            }
            proxy_pass $proxpass;

            rewrite ^(.+)/+$ $1 permanent;

            # Send actual client IP upstream
            proxy_set_header X-Real-IP $remote_addr;
            proxy_set_header Host $host;
            proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        }

        # backend
        location ~ ^/(api|pictrs|feeds|nodeinfo|.well-known) {
            proxy_pass "http://lemmy";

            # proxy common stuff
            proxy_http_version 1.1;
            proxy_set_header Upgrade $http_upgrade;
            proxy_set_header Connection "upgrade";

            # Send actual client IP upstream
            proxy_set_header X-Real-IP $remote_addr;
            proxy_set_header Host $host;
            proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        }
    }
}
```
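The two `location` blocks in this nginx.conf decide which upstream receives each request: paths starting with /api, /pictrs, /feeds, /nodeinfo or /.well-known always go to the lemmy backend, and everything else goes to lemmy-ui unless it is an ActivityPub fetch (identified by its Accept header) or a POST. The Python function below is a simplified model of that decision, written for illustration only. `pick_upstream` is a hypothetical name, not part of nginx or Lemmy, and the model ignores the trailing-slash rewrite and header forwarding.

```python
import re

# Same pattern as the backend "location ~" block; Python's re interprets it the same way here.
BACKEND_PATH = re.compile(r"^/(api|pictrs|feeds|nodeinfo|.well-known)")

# Exact Accept values that the "location /" block sends to the backend.
ACTIVITYPUB_ACCEPT = {
    "application/activity+json",
    'application/ld+json; profile="https://www.w3.org/ns/activitystreams"',
}


def pick_upstream(method, path, accept=""):
    """Return "lemmy" or "lemmy-ui" for a request, mirroring nginx.conf (simplified)."""
    # A matching regex location takes precedence over the plain "location /" prefix match.
    if BACKEND_PATH.match(path):
        return "lemmy"
    # Inside "location /": default to the UI, then override for ActivityPub or POST.
    upstream = "lemmy-ui"
    if accept in ACTIVITYPUB_ACCEPT:
        upstream = "lemmy"
    if method == "POST":
        upstream = "lemmy"
    return upstream


if __name__ == "__main__":
    for req in [("GET", "/api/v3/site", ""),
                ("GET", "/c/asklemmy", ""),
                ("GET", "/c/asklemmy", "application/activity+json"),
                ("POST", "/inbox", "")]:
        print(req, "->", pick_upstream(*req))
```

In this sketch, as in the nginx `if` conditions, the Accept comparisons are exact string matches, so an Accept header that lists several media types falls through to lemmy-ui.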

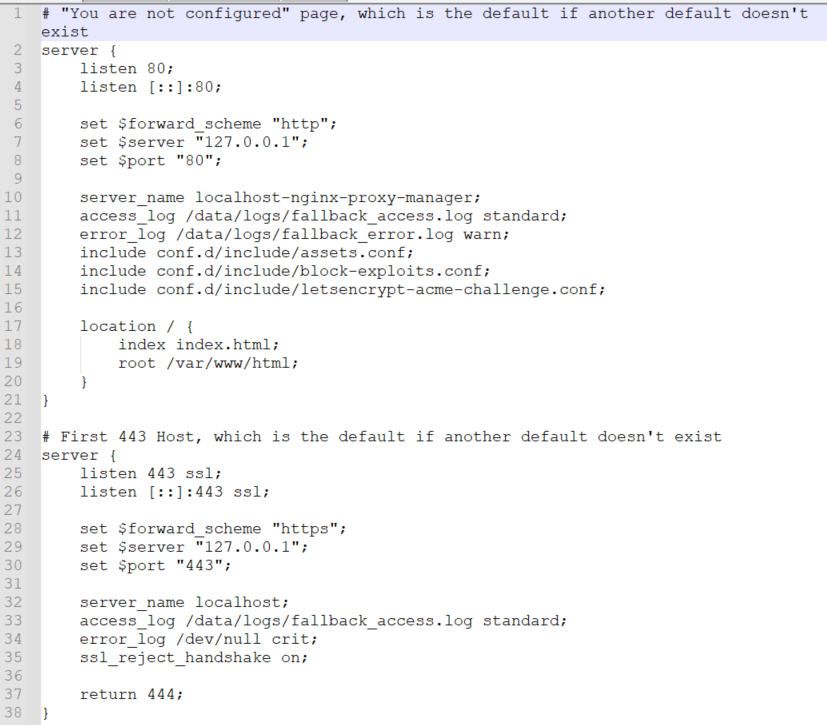

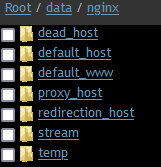

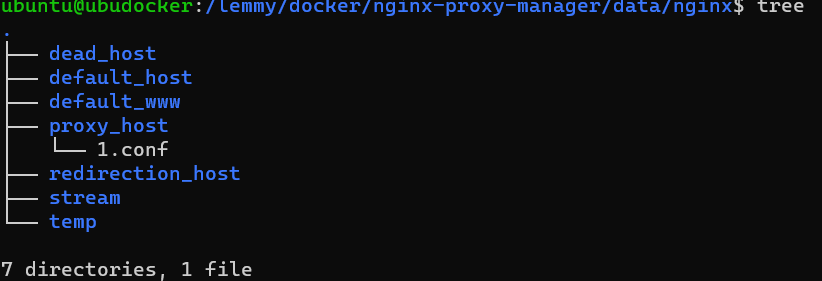

The nginx-proxy-manager container only needs to share a Docker network with the internal nginx:1-alpine container from the stack, namely the lemmyexternalproxy network.

Create a proxy host in NPM that forwards to the IP address of the internal nginx:1-alpine container on the lemmyexternalproxy network, using scheme http and port 80. Enable the Websockets Support option.

https://lemmy.bulwarkob.com/pictrs/image/55870601-fb24-4346-8a42-bb14bb90d9e8.png
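To find the IP address NPM should forward to, `docker inspect <container>` prints a JSON array whose `NetworkSettings.Networks` object lists each attached network with its `IPAddress`. Docker Compose normally prefixes network names with the project name (by default the directory name), so the network may show up as something like `<project>_lemmyexternalproxy`. The sketch below is a hypothetical helper, not part of NPM or Docker, that pulls that address out of the inspect output; the sample JSON and container name are made up for illustration.

```python
import json


def ip_on_network(inspect_json, network_suffix="lemmyexternalproxy"):
    """Return the IP on the first network named network_suffix or ending in "_" + network_suffix."""
    containers = json.loads(inspect_json)  # docker inspect prints a JSON array
    for name, settings in containers[0]["NetworkSettings"]["Networks"].items():
        if name == network_suffix or name.endswith("_" + network_suffix):
            return settings["IPAddress"]
    return None


# Hypothetical, trimmed-down output of "docker inspect <proxy container>".
SAMPLE = """[
  {"NetworkSettings": {"Networks": {
      "lemmy_lemmyinternal": {"IPAddress": "172.20.0.6"},
      "lemmy_lemmyexternalproxy": {"IPAddress": "172.21.0.2"}
  }}}
]"""

if __name__ == "__main__":
    print(ip_on_network(SAMPLE))  # 172.21.0.2
```

On a real host, the string passed in would come from something like `subprocess.run(["docker", "inspect", "<proxy container>"], capture_output=True, text=True).stdout`. The address is assigned by Docker and can change when the container is recreated, so it is worth re-checking after the stack is rebuilt.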

Then use the SSL tab to set up your certificate. As far as I know, NPM is free to work with other containers on other networks as well.