this post was submitted on 28 Jan 2025

1103 points (97.6% liked)

Microblog Memes

A place to share screenshots of Microblog posts, whether from Mastodon, tumblr, ~~Twitter~~ X, KBin, Threads or elsewhere.

Created as an evolution of White People Twitter and other tweet-capture subreddits.

Rules:

- Please put at least one word relevant to the post in the post title.

- Be nice.

- No advertising, brand promotion or guerilla marketing.

- Posters are encouraged to link to the toot or tweet etc in the description of posts.

founded 2 years ago

Why'd you use a Chinese website instead of just running the model, which does not output that for that question?

I tried the Qwen-14B distilled R1 version on my local machine, and it clearly has a bias in favor of anything CCP-related.

That said, all models have biases and safeguards, so IMO whether you trust it just depends on your application. There's no one model to rule them all in every single aspect.

The actual local R1 model (the 671b one) does give that output, because some of the censorship is baked into the training data. You're probably referring to the smaller models, which don't have that censorship because they are distilled versions of R1 based on Llama and Qwen (the 1.5b, 7b, 8b, 14b, 32b, and 70b versions).

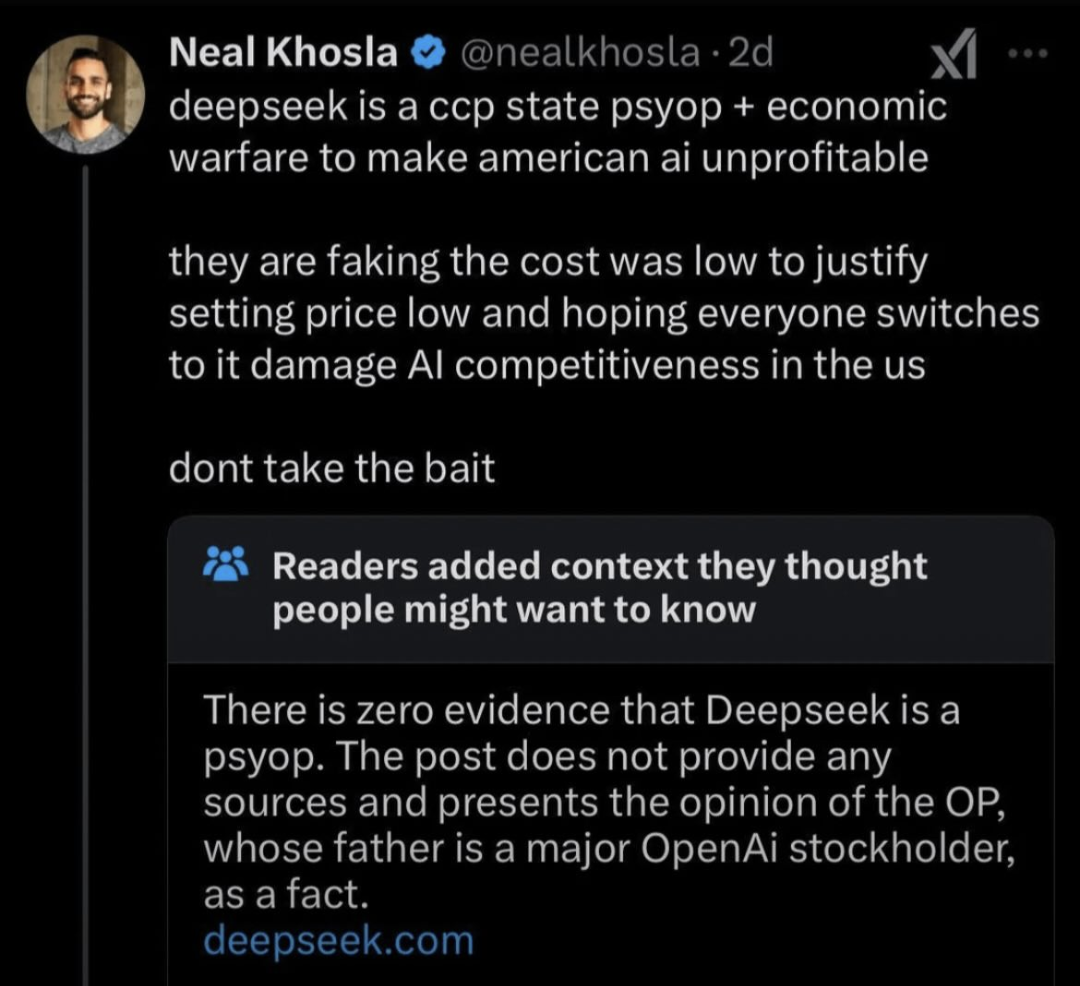

You can see a more in-depth discussion of that here: trigger warning: neoliberal techbros

I was running the model locally. It's hardcoded in, bro. It still outputs lies.