this post was submitted on 21 Sep 2024

54 points (80.0% liked)

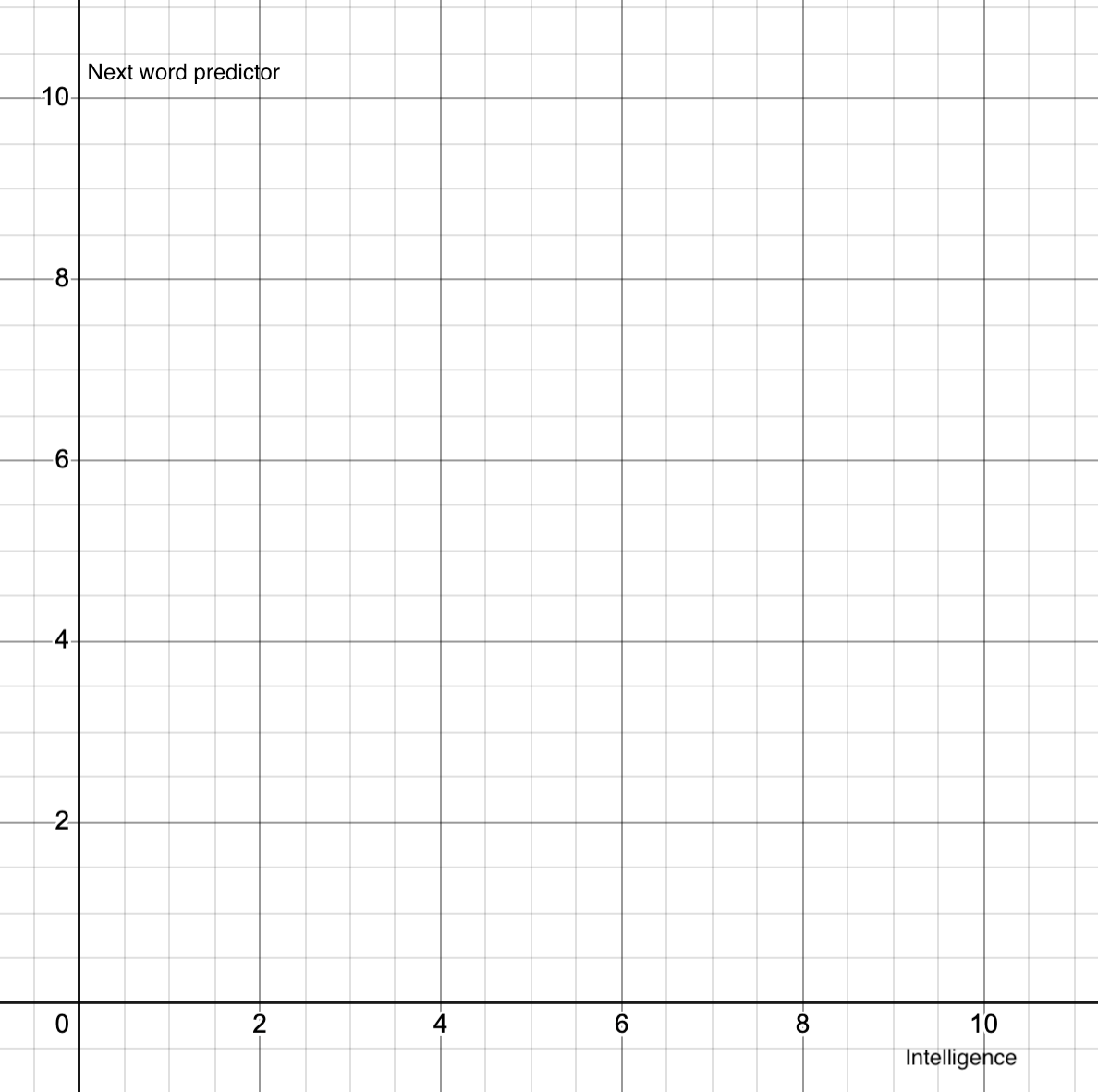

Hell no. Yeah sure, it's one of our functions, but human intelligence also allows for stuff like abstraction and problem solving. There are things that you can do in your head without using words.

I mean, I know that about my mind. Not anybody else's.

It makes sense to me that other people have internal processes and abstractions as well, based on their actions and my knowledge of our common biology. Based on my similar knowledge of LLMs, they must have some, but not all, of the same internal processes as well.