this post was submitted on 21 Sep 2024

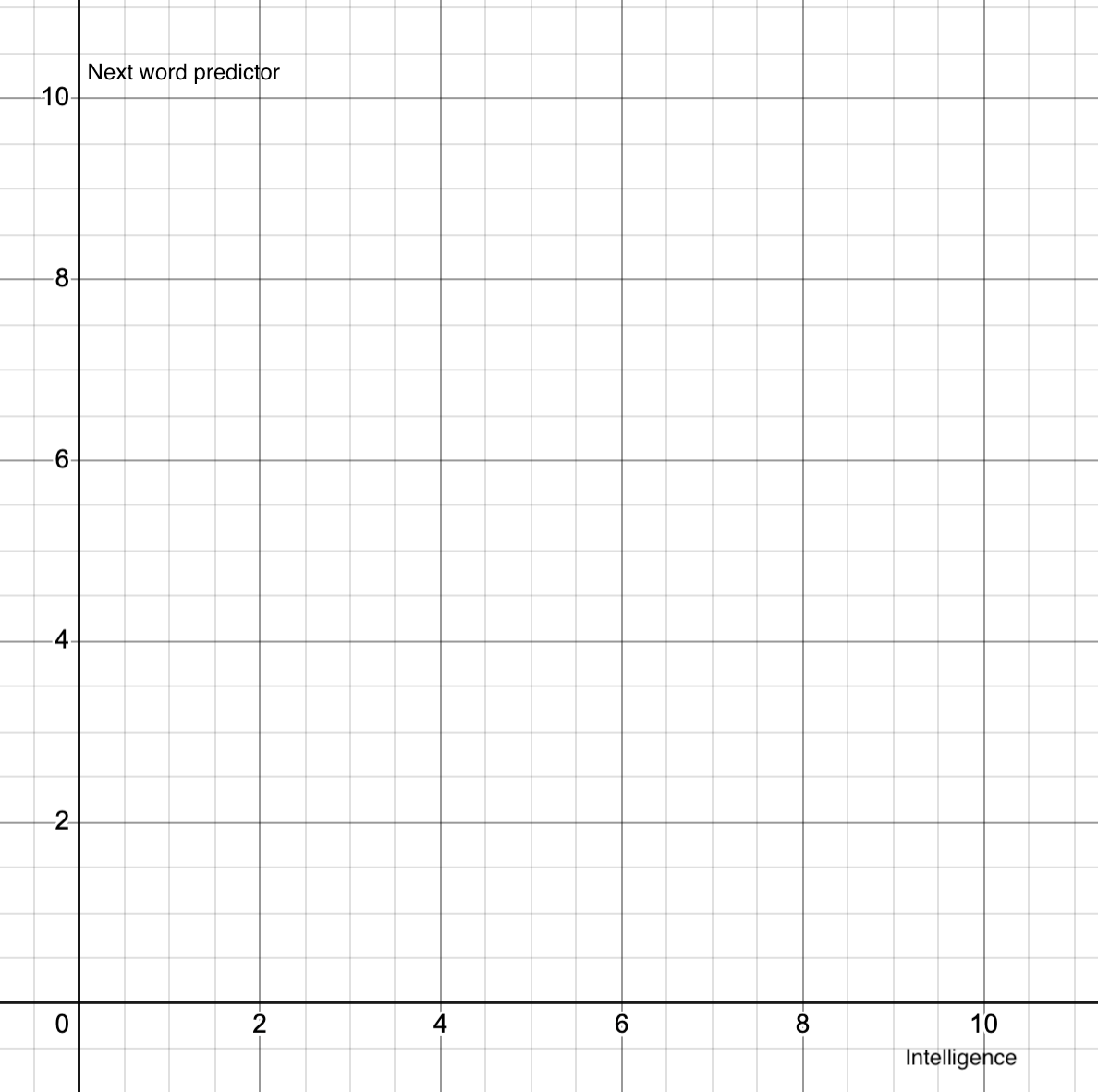

IMO, and this is backed a bit by some fairly recent studies, LLMs not only lack intelligence entirely, they are incapable of it.

Human intelligence and consciousness likely have a lot to do with microtubules that trigger quantum wave function collapse and allow for decision making. Computers simply do not function this way. Computers are processing machines. They have logic gates with 2 states: binary logic, like 101101110011.

If the new studies on microtubules are right, biological brains are simply operating on an entirely different level and playing by a different set of rules than computers. It's not an issue of getting the software right or getting more processing power. It's an issue of the machine's physical capability to perform certain functions.