MoreWrite

Post bits of your writing and links to stuff you’ve written here for constructive criticism.

If you post anything here, try to specify what kind of feedback you would like. For example, are you looking for a critique of your assertions, creative feedback, or an unbiased editorial review?

If OP specifies what kind of feedback they'd like, please respect it. If they don't specify, don't take it as an invitation to debate the semantics of what they are writing about. Honest feedback isn’t required to be nice, but don’t be an asshole.

I wrote this and I want to know what else someone would want to know, if I'm dead wrong on any of it, etc.

the channel: https://www.youtube.com/@pivottoai

How I do the Pivot to AI YouTube videos

Cat: site news

Img: a title card, or me looking horrified at s/t

The Pivot to AI video series is getting views and subscribers, which is nice!

It’s a couple of hours to write a post and expand it into a script, fifteen minutes to record if nothing breaks or falls over, and another hour or two to clean up the audio and assemble the video, including screenshots of sources.

The philosophy is: your content is what matters, everything else is a bonus. Put in effort, not money. We’re making punk rock here. I did fanzines in the ’80s and books in the 2010s on the same principles.

Fortunately, cheap consumer electronics are good enough in 2025. Here’s how I do videos on a budget of zero.

-- read more --

Camera: I use my phone, which is the best camera in the house. It does 1080p on the front-facing camera. This records H.264 video and 96kbps AAC sound, which is fine for voice.

I try to do everything in a single take. Fancy is my enemy. “We’ll fix it in post” is a film-maker phrase meaning “well, that was a cock-up.” Everything you fix in post takes ten to twenty times longer than getting it right the first time.

Camera mount: anything that will mount a phone stably. The loved one and kid got me a ring light for Christmas and told me to rant on TikTok when something annoyed me. Unfortunately, ring lights are incompatible with wearing glasses - you get virtual googly eyes projected perfectly onto your lenses - but the phone holder bit still works well mounted on the desk. I also have an older phone mount for a camera tripod.

Microphone: Your video can be iffy, but your sound has to be good.

I use my Jabra Evolve 40 headset, which was designed for work Zoom calls. This is not great, but it’ll do. I turn the bass up in post-production and it sounds better.

I really want a Røde - the loved one has a Røde M3, which is all the mic you need for a remarkable range of use cases, for around £80. The M3 really needs phantom power - if you use a battery, you will forget it’s switched on and it’ll go flat - which is another £20 box. But Røde make an enormous variety of good podcasting mics of professional quality and you’re unlikely to go wrong. A Røde is the next piece of kit I’ll spend money on.

Lighting: A 9W daylight bulb above, a 9W daylight bulb to my left, and a 9W yellow bulb (“warm white”) to my right are doing the job so far. The lights to my left and right are in clip-on gooseneck mounts attached to the shelves.

Teleprompter: Elegant Teleprompter on Android. This puts a floating window over your camera app. It’s free with unobtrusive ads and the developer is very in-touch with his user base and likes to fix problems.

Sound: I edit the sound in Audacity, which is free, open source, and just works. If you know what you want to do, you can probably do it. I normalise the very quiet phone audio, do a noise reduction, pump up the bass by 4.5dB, then go through the audio de-umming and removing breath noise, the latter being the main reason I want a microphone that isn’t up my nose. Then compression, then it’s ready.
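For anyone who'd rather script the automatable parts of that chain, here's a rough sketch. It is not the Audacity workflow itself but an approximation using stock ffmpeg audio filters: `loudnorm` (EBU R128 loudness normalisation, which isn't the same as Audacity's peak Normalize), `afftdn` (FFT noise reduction), `bass` (low-shelf boost) and `acompressor`, all at default settings apart from the bass gain. The function name, file names and 48 kHz output rate are assumptions for illustration, and the de-umming and breath removal still have to be done by ear.

```python
import shlex


def audio_cleanup_cmd(src: str, dst: str, bass_gain_db: float = 4.5) -> list[str]:
    """Build an ffmpeg command roughly mirroring the Audacity chain:
    normalise -> noise reduction -> bass boost -> compression.

    This is an approximation; manual de-umming and breath removal
    are not automated here.
    """
    filters = [
        "loudnorm",                # EBU R128 loudness normalisation (not peak Normalize)
        "afftdn",                  # FFT-based noise reduction, default settings
        f"bass=g={bass_gain_db}",  # low-shelf bass boost, gain in dB
        "acompressor",             # dynamic range compression, default settings
    ]
    return [
        "ffmpeg", "-i", src,
        "-vn",                     # audio only; the video is handled separately
        "-af", ",".join(filters),  # filters run left to right, in the order above
        "-ar", "48000",            # pin the output sample rate (48 kHz is an assumption)
        dst,
    ]


if __name__ == "__main__":
    # Hypothetical file names.
    print(shlex.join(audio_cleanup_cmd("raw_phone_video.mp4", "cleaned_audio.wav")))
```

The filter order follows the order described above; in practice you might prefer to normalise last, after compression has changed the levels.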

Screenshots: I take these in Firefox and edit them in GIMP. I also make the title cards in GIMP. Just make things 1280×720.

Video editing: I use OpenShot, which is a bit open-source, but it basically works and it’s free. I get the raw video, the cleaned audio, the various still images, and the theme music, and assemble the final video. Export at 720p as “MP4 (H.264 va).” I could go to 1080p, but this is a talking head show and you don’t need my nose hairs that sharp.

ffmpeg: this is the Swiss army knife of video and about as fiddly to use. I’m very into tweaking things in ffmpeg.

OpenShot had trouble with the Nature video: it kept freezing late in the video while rendering the 7-minute raw file. I ran the raw video through ffmpeg to add a key frame every ten frames, which is probably overkill, but it worked, so I’ve kept doing it. I do not recommend you do this unless you specifically have this problem. But, to generate an oversized video with no sound (because the cleaned-up audio track is separate) and a ton of extra key frames:

ffmpeg -i input.mp4 -vcodec libx264 -x264-params keyint=10:scenecut=0 -crf 14 -an output.mp4
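To unpack the flags in that command: `keyint=10` caps the gap between keyframes at 10 frames, `scenecut=0` stops x264 adding extra keyframes at detected scene changes (so they land on a fixed grid), `-crf 14` asks for very high quality (lower CRF means bigger files), and `-an` drops the audio. The sketch below uses hypothetical helper names, not part of any tool; it rebuilds the same command from parameters and roughly estimates how many keyframes result. The 30 fps frame rate in the usage example is an assumption, since phones vary.

```python
import shlex


def keyframe_reencode_cmd(src: str, dst: str, keyint: int = 10, crf: int = 14) -> list[str]:
    """Rebuild the re-encode command: video only, a keyframe every `keyint` frames."""
    return [
        "ffmpeg", "-i", src,
        "-vcodec", "libx264",
        # keyint = max frames between keyframes; scenecut=0 turns off extra
        # keyframes at scene changes, so they land on a fixed grid.
        "-x264-params", f"keyint={keyint}:scenecut=0",
        "-crf", str(crf),  # lower CRF = higher quality and a bigger file
        "-an",             # no audio: the cleaned-up track is added in the editor
        dst,
    ]


def keyframe_count(duration_s: float, fps: float, keyint: int = 10) -> int:
    """Rough keyframe count: frame 0 is a keyframe, then one every `keyint` frames."""
    frames = round(duration_s * fps)
    return -(-frames // keyint)  # ceiling division


if __name__ == "__main__":
    print(shlex.join(keyframe_reencode_cmd("input.mp4", "output.mp4")))
    # 7-minute clip at an assumed 30 fps.
    print(keyframe_count(7 * 60, 30))
```

At an assumed 30 fps, a 7-minute raw file is 12,600 frames, or about 1,260 keyframes, which is part of why the output comes out oversized.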

I can also do things like stretch a clip to 1.2× length to make speech clearer:

ffmpeg -i fatima.mp4 -vf "setpts=1.2*PTS" -af "atempo=0.8333" fatima2.mp4
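The 0.8333 is just 1/1.2: `setpts=1.2*PTS` stretches the video timestamps by 1.2, so the audio has to play at 1/1.2 of its tempo to stay in sync, and `atempo` does that without changing pitch. Here's a minimal sketch (the helper name is hypothetical) that works the value out for any stretch factor, chaining `atempo` stages for big slow-downs because a single `atempo` won't go below 0.5.

```python
import shlex


def stretch_cmd(src: str, dst: str, factor: float) -> list[str]:
    """Make a clip `factor` times longer (1.2 = 20% longer), audio kept in sync."""
    if factor <= 0:
        raise ValueError("factor must be positive")
    tempo = 1.0 / factor  # audio must play at 1/factor speed to match the video
    # A single atempo accepts 0.5-100 in current ffmpeg (older builds capped it
    # at 2.0), so chain 0.5 stages for slow-downs beyond 2x.
    stages = []
    while tempo < 0.5:
        stages.append("atempo=0.5")
        tempo /= 0.5
    stages.append(f"atempo={tempo:.4f}")
    return [
        "ffmpeg", "-i", src,
        "-vf", f"setpts={factor}*PTS",  # stretch video timestamps by `factor`
        "-af", ",".join(stages),        # slow audio tempo without changing pitch
        dst,
    ]


if __name__ == "__main__":
    print(shlex.join(stretch_cmd("fatima.mp4", "fatima2.mp4", 1.2)))
```

Chained `atempo` stages multiply, so a 3× stretch becomes `atempo=0.5,atempo=0.6667` (0.5 × 0.6667 ≈ 1/3).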

When you finally give in to the urge for fancy with equipment, pro podcaster kit is super cheap on Alibaba and hence Amazon. Go through surplus listings and see if you’re lucky.

It's been a good minute since I took a shot at predicting how the AI bubble (and its burst) is gonna play out. This is less a dedicated post and more a compilation of the bajillion thoughts I've had about this.

- Throwing the Humanities a Bone

Right off the bat, I suspect the arts/humanities will gain a degree of begrudging respect at the direct expense of tech's public image taking a nosedive. My primary reason for this is the slop-nami and its widespread impact on the Internet at large.

On one end, you have an utter tidal wave of AI-generated slop flooding basically every corner of daily life, giving us a nonstop torrent of misinformation (political or otherwise) and inhumanly shit "artwork" which clogs the Internet with garbage and drowns out human voices wherever it can, acting as an omnipresent annoyance at best and a direct threat to people's livelihoods at worst.

On the other end, you have the AI bros responsible for/complicit in this slop-nami, who uncritically praise whatever garbage gen-AI creates, relentlessly hype up its "creative" qualities and endlessly doomsay about the incoming Artificial General Intelligence that will wipe out humanity/solve all of humanity's problems/God-Knows-Fucking-What.

Combined, these two aspects of the bubble paint a picture of tech as an utterly insipid and artless field, full of soulless dilettantes who are incapable of making or understanding art at best and actively hostile to it at worst.

If that new image of tech takes root in the public consciousness, it's gonna make the arts/humanities look a fair bit better by comparison. Sure, the fields are still gonna have to deal with the stigma of being a "useless degree", but it's better than your degree being taken as an indictment of you as a person.

Confidence: Low. This is pure gut instinct (and probably hope) talking from watching the slop-nami first-hand - whilst it could force people to appreciate the arts a bit more, nothing's stopping the slop-nami from devaluing the arts even fucking more.

- Smells Like Fash Spirit

On another front entirely, I expect this bubble will leave the tech industry with a reputation as a Nazi bar - a reputation that will linger even after Trump and his cronies are thrown out of office.

Silicon Valley's sucking up to Trump and DOGE's AI-Powered^tm^ annihilation of the state are doing wonders on this particular front, but fascists' fascination with slop and TESCREAL being fash to its core are likely helping build this image as well.

Referencing an earlier comment of mine, I expect AI as a concept will get hit with the stench of fash as well - considering the TESCREAL movement partially powering this bubble is fascist as hell, that seems a safe bet.

Confidence: High. Unlike Prediction 1, I've got some solid evidence to suggest tech's picking up a reputation for fascism. Mainly Silicon Valley's jackbooted goose-stepping to Trump's tune.

In the spirit of our earlier "happy computer memories" thread, I'll open one for happy book memories. What's a book you read that occupies a warm-and-fuzzy spot in your memory? What book calls you back to the first time you read it, the way the smell of a bakery brings back a conversation with a friend?

As a child, I was into mystery stories and Ancient Egypt both (not to mention dinosaurs and deep-sea animals and...). So, for a gift one year I got an omnibus set of the first three Amelia Peabody novels. Then I read the rest of the series, and then new ones kept coming out. I was off at science camp one summer when He Shall Thunder in the Sky hit the bookstores. I don't think I knew of it in advance, but I snapped it up and read it in one long summer afternoon with a bottle of soda and a bag of cookies.

In this guide I will offer a step-by-step explanation to running a successful business. I am the owner of a startup company at Y Combinator in SF Bay Area. I am a thought leader, podcaster, financial coach, fertility coach, "coach guru" coach, professional wrestler (also an aspiring author with 37 books published in the last two years).

-

Be Like Me

This is obviously good advice in general, but if you want to run a successful business, it is necessary. In order to have a good business, you need to assume the position of someone who is already very successful, like myself. You must work on yourself to be more like me, that includes your attitude, your attire, your personality, your body shape, and your race. You should also have my name and identity documents if that's possible.

-

Borrow Money

You should make a document that is a random assortment of keywords that are trending on both LinkedIn and X. Doing this, venture capitalist investors should impulsively throw money at you. If that doesn't happen, ask a rich friend to give you money. If you don't have a rich friend, the best way to get one is to be rich yourself. You can find a tutorial on how to do that at the beginning of this document. That's why following this guide is a prerequisite for following this guide.

-

Pivot To a More Aggressive Funding Model

Now that you have successfully ~~stolen~~ entrepreneurialized large sums of money, you need to shift your debts to ~~victims~~ clients that are less likely to be listened to by the ~~authorities~~ market forces. You should convince your customers to invest in your company, and if not possible you should raise your subscription prices until you start to lose subscribers and then increase them some more. You will notice that I have not offered any advice on how to manage your company, and that is because the success of your business does not depend on what it does and even on whether it operates at all. To finish your plan, you should emigrate to Saudi Arabia, but if you are in real danger you should then pass through Iran, and finally arrive in Russia. If you are in the US you can probably stay where you are.

we have a WriteFreely instance now! I wrote up a guide to why it exists, why it's so fucking janky, and what we can do to fix it.

Well, Trump's got elected, I'm deeply peeved about the future, and I need to feel like I can see the future coming.

Fuck it. Here's some off-the-cuff predictions, because I need to.

- There's a Spike in Infanticides

Under normal circumstances, anyone who doesn't want to deal with raising crotch goblins has two major options: use contraception to keep the pregnancy from happening at all, or shitcan the unborn fetus by getting an abortion.

Abortion's been on the way out since Roe v. Wade got double-tapped, and contraception's probably gonna get nuked as well, so sending the baby to an early grave is probably gonna be the only option for most unwanted births.

Chance: Low/Moderate. This is more-or-less pure gut feeling I'm going on, and chances are there's gonna be a lot of abortions getting recorded as infanticides and skewing the numbers.

- America's Reputation Nosedives

Gonna just copy-paste istewart's comment for this, because bro said it better than I could:

I am left thinking that many people here in the US are going to have a hard time accepting that having this person, at this stage in his life, as the national figurehead will do permanent damage to the US’ prestige and global standing. Doesn’t matter if Reagan was sundowning, media was more controlled then and his handlers were there to support the institution of the presidency at least as much as they were there to support a narcissist. In 2016, other countries could look at Trump as a temporary aberration and wait him out. This time, it’s clear that the US is no longer a reliable partner.

Chance: Near-Guaranteed. With 2016, the US could point to Hillary winning the popular vote to blunt the damage. 2024 gives no real way to spin it - a majority of Americans explicitly wanted Trump as president. Whatever he does, it's in their name.

- Violence Against Silicon Valley

I've already predicted this before, but I'm gonna predict it again. Whether it's because Trump scapegoats the Valley as someone else predicted, labor comes to believe peace is no longer an option, someone decides "fuck it, time to make Uncle Ted proud", or God-knows-what-else, I fully expect there's gonna be direct and violent attacks on tech at some point.

No clue on the exact form either - could be someone getting punched for wearing Ray-Ban Autodoxxers, could be rank-and-file employees getting bombed, could be Trumpies pulling a Pumped Up Kicks, fuck if I know.

Chance: Moderate. The tech industry's managed to piss off both political wings for one reason or another, though the left's done a good job not resorting to pipe bombs during my lifetime.

- Another Assassination Attempt

With Trump having successfully evaded justice on his criminal convictions (which LegalEagle's discussed in depth), the odds of him seeing the inside of a jail cell are virtually zero. This leaves vigilante justice as the only option for anyone who wants this man to face anything approaching consequences for his actions.

Chance: Low. Presidents are very well-protected these days, though Trump's own ego and stupidity may end up opening him up to an attempt.

- A Total Porn Ban

Project 2025 may be defining everything even remotely queer as "pornography", but nothing's stopping a Trump presidency from putting regular porn on the chopping block as well. With the existing spate of anti-porn laws in the US, there's already some minor precedent.

Chance: Moderate/High. Porn doesn't enjoy much political support from either the Dems or the Reps, though the porn industry could probably get the Dems' support if it knows what it's doing.

I just published this on our new WriteFreely instance. It's a write-directly-into-the-CMS-and-hit-publish job that took an hour. It's about the difference between the purpose of a thing and the purpose of the UX designers who work on that thing.

P.S. I only skim-proofread it, so expect weird gibberish (ha)

(This is an expanded version of two of my comments [Comment A, Comment B] - go and read those if you want)

Well, Character.ai got themselves into some real deep shit recently - repeat customer Sewell Setzer shot himself, and his mother, Megan Garcia, is suing the company, its founders and Google as a result, accusing them of "anthropomorphising" their chatbots and offering “psychotherapy without a license,” among other things, and demanding a full-blown recall.

Now, I'm not a lawyer, but I can see a few aspects which give Garcia a pretty solid case:

-

The site has "mental health-focused chatbots like “Therapist” and “Are You Feeling Lonely,” which Setzer interacted with", as Emma Roth noted for The Verge.

-

Character.ai has already had multiple addiction/attachment cases like Sewell's - I found articles from Wired and news.com.au, plus a few user testimonies (Exhibit A, Exhibit B, Exhibit C) about how damn addictive the fucker is.

-

As Kevin Roose notes for the NYT, "many of the leading A.I. labs have resisted building A.I. companions on ethical grounds or because they consider it too great a risk". That could be used to suggest Character.ai was being particularly reckless.

-

Google's researchers published a paper which warned of the potential harms chatbots can cause, noting that users can be "persuaded to take their own life" and referencing a case of exactly that for proof (Added March 18th 2025 - thanks to Maggie Harrison Dupré for finding this)

Which way the suit's gonna go, I don't know - my main interest's on the potential fallout.

Some Predictions

Win or lose, I suspect this lawsuit is going to sound Character.ai's death knell - even if they don't get regulated out of existence, "our product killed a child" is the kind of Dasani-level PR disaster few companies can recover from, and news of this will likely prompt any would-be investors to run for the hills.

If Garcia does win the suit, it'd more than likely set a legal precedent which denies Section 230 protection to chatbots, if not AI-generated content in general. If that happens, I expect a wave of lawsuits against other chatbot apps like Replika, Kindroid and Nomi at the minimum.

As for the chatbots themselves, I expect they're gonna lock their shit down hard and fast to avoid having a situation like this on their hands, and I expect their users are gonna be pissed.

As for the AI industry at large, I suspect they're gonna try and paint the whole thing as a frivolous lawsuit and Garcia as denying any fault for her son's suicide, à la the "McDonald's coffee case". How well this will do, I don't know - personally, considering the AI industry's godawful reputation with the public, I expect they're gonna have some difficulty.

So, after the Routledge thing, I got to wondering. I've had experience with a few noble projects that fizzled for lacking a clear goal, or at least a clear breathing point where we could say, "Having done this, we're in a good place. Stage One complete." And a project driven by volunteer idealism — the usual mix of spite and whimsy — can splutter out if it requires more than one person to be making it a high/top priority. If half a dozen people all like the idea but each of them ranks it 5th or 6th among things to do, academic life will ensure that it never gets done.

With all that in mind, here is where my thinking went. I provisionally tagged the idea "Harmonice Mundi Books", because Kepler writing about the harmony of the world at the outbreak of the Thirty Years' War is particularly resonant to me. It would be a micro-publisher with the tagline "By scholars, for scholars; by humans, for humans."

The Stage One goal would be six books. At least one would be by a "big name" (e.g., someone with a Wikipedia article that they didn't write themselves). At least one would be suitable for undergraduates: a supplemental text for a standard course, or even a drop-in replacement for one of those books that's so famous it's known by the author's last name. The idea is to be both reputable and useful in a readily apparent way.

Why six books? I want the authors to get paid, and I looked at the standard flat fee that a major publisher paid me for a monograph. Multiplying a figure in that range by 6 is a budget that I can imagine cobbling together. Not to make any binding promises here, but I think that authors should also get a chunk of the proceeds (printing will likely be on demand), which would be a deal that I didn't get for my monograph.

Possible entries in the Harmonice Mundi series:

-

anything you were going to send to a publisher that has since made a deal with the LLM devil

-

doctoral theses

-

lecture notes (I find these often fall short of being full-fledged textbooks, chiefly by lacking exercises, but perhaps a stipend is motivation to go the extra km)

-

collections of existing long-form online writing, like the science blogs of yore

-

text versions of video essays — zany, perhaps, but the intense essayists already have manual subtitles, so maybe one would be willing to take the next, highly experimental step

Skills necessary for this project to take off:

-

subject-matter editor(s) — making the call about what books to accept, in the case we end up with the problem we'd like to have, i.e., too many books; and supervising the revision of drafts

-

production editing — everything from the final spellcheck to a print-ready PDF

-

website person — the site could practically be static, but some kind of storefront integration would be necessary (and, e.g., rigging the server to provide LLM scrapers with garbled material would be pleasingly Puckish)

-

visuals — logo, website design, book covers, etc. We could have all the cover art be pictures of flowers that I have taken around town, but we probably shouldn't.

-

publicity — getting authors to hear about us, and getting our books into libraries and in front of reviewers

Anyway, I have just barely started looking into all the various pieces here. An unknown but probably large amount of volunteer enthusiasm will be needed to get the ball rolling. And cultures will have to be juggled. I know that there are some tasks I am willing to do pro bono because they are part of advancing the scientific community, I am already getting a salary, and nobody else is profiting. I suspect that other academics have made similar mental calculations (e.g., about which journals to peer review for). But I am not going to go around asking creative folks to work "for exposure".

(This is basically an expanded version of a comment on the weekly Stubsack - I've linked it above for convenience's sake.)

This is pure gut instinct, but I’m starting to get the feeling this AI bubble’s gonna destroy the concept of artificial intelligence as we know it.

On the artistic front, there's the general tidal wave of AI-generated slop (which I've come to term "the slop-nami") which has come to drown the Internet in zero-effort garbage, interesting only when the art's utterly insane or its prompter gets publicly humiliated, and, to quote Line Goes Up, "derivative, lazy, ugly, hollow, and boring" the other 99% of the time.

(And all while the AI industry steals artists' work, destroys their livelihoods and shamelessly mocks their victims throughout.)

On the "intelligence" front, the bubble's given us public and spectacular failures of reasoning/logic like Google gluing pizza and eating onions, ChatGPT sucking at chess and briefly losing its shit, and so much more - even in the absence of formal proof LLMs can't reason, it's not hard to conclude they're far from intelligent.

All of this is, of course, happening whilst the tech industry as a whole is hyping the ever-loving FUCK out of AI, breathlessly praising its supposed creativity/intelligence/brilliance and relentlessly claiming that they're on the cusp of AGI/superintelligence/whatever-the-fuck-they're-calling-it-right-now, they just need to raise a few more billion dollars and boil a few more hundred lakes and kill a few more hundred species and enable a few more months of SEO and scams and spam and slop and soulless shameless scum-sucking shitbags senselessly shitting over everything that was good about the Internet.

The public's collective consciousness was ready for a lot of futures regarding AI - a future where it took everyone's jobs, a future where it started the apocalypse, a future where it brought about utopia, etcetera. A future where AI ruins everything by being utterly, fundamentally incompetent, like the one we're living in now?

That's a future the public was not ready for - sci-fi writers weren't playing with the idea of "incompetent AI ruins everything" much (Paranoia is the only example I know of), and the tech press wasn't gonna run stories about AI's faults until it became unignorable (like that lawyer who got in trouble for taking ChatGPT at its word).

Now, of course, the public's had plenty of time to let the reality of this current AI bubble sink in, to watch as the AI industry tries and fails to fix the unfixable hallucination issue, to watch the likes of CrAIyon and Midjourney continually fail to produce anything even remotely worth the effort of typing out a prompt, to watch AI creep into and enshittify every waking aspect of their lives as their bosses and higher-ups buy the hype hook, line and fucking sinker.

All this, I feel, has built an image of AI as inherently incapable of humanlike intelligence/creativity (let alone Superintelligence^tm^), no matter how many server farms you build or oceans of water you boil.

Especially so on the creativity front - publicly rejecting AI, like what Procreate and Schoolism did, earns you an instant standing ovation, whilst openly shilling it (like PC Gamer or The Bookseller) or showcasing it (like Justine Moore, Proper Prompter or Luma Labs) gets you publicly and relentlessly lambasted. To quote Baldur Bjarnason, the “E-number additive, but for creative work” connotation of “AI” is more-or-less a permanent fixture in the public’s mind.

I don't have any pithy quote to wrap this up, but to take a shot in the dark, I expect we're gonna see a particularly long and harsh AI winter once the bubble bursts - one fueled not only by disappointment in the failures of LLMs, but widespread public outrage at the massive damage the bubble inflicted, with AI funding facing heavy scrutiny as the public comes to treat any research into the field as done with potentially malicious intent.

I just want to share a little piece of this provocation and would like to know how compelling it sounds. I've been sitting on it for a while and starting to think it's probably not earning that much space in words. The overarching point is that anyone who complains about constraints imposed on them as being constraints in general either isn't making something purposeful enough to concretely challenge the constraints or isn't actually designing because they haven't done the hard work of understanding the constraints between them and their purpose. Anyway, this is a snippet from a longer piece which leads to a point that the scumbags didn't take over, but instead the environment evolved to create the perfect habitat for scumbags who want to make money from providing as little value as possible:

The constraints of taking up space

Software was once sold on physical media packaged in boxes that were displayed with price tags on shelves alongside competing products in brick and mortar stores.

Limited shelf space stifled software makers into making products innovative enough to earn that shelf space.

The box that packaged the product stifled software makers into having a concrete purpose for their product which would compel more interest than the boxes beside it.

The price tag stifled software makers into ensuring that the product does everything it says on the box.

The installation media stifled software makers into making sure their product was complete and would function.

The need to install that software, completely, on the buyer’s computer stifled the software makers further into delivering on the promises of their product.

The pre-broadband era stifled software makers into ensuring that any updates justified the time and effort it would take to get the bits down the pipe.

But then…

Connectivity speeds increased, and always-on broadband connectivity became widespread. Boxes and installation media were replaced by online purchases and software downloads.

Automatic updates reduced the importance of version numbers. Major releases which marked a haul of improvements significant enough to consider it a new product became less significant. The concept of completeness in software was being replaced by iterative improvements. A constant state of becoming.

The Web matured with advancements in CSS and JavaScript. Web sites made way for Web apps. Installation via downloads was replaced by Software-as-a-service. It’s all on a web server, not taking up any space on your computer’s internal storage.

Software as a service instead of a product replaced the up-front price tag with the subscription model.

…and here we are. All of the aspects of software products that take up space, whether that be in a store, in your home, on your hard disk, or in your bank account, are gone.

This started as a summary of a random essay Robert Epstein (fuck, that's an unfortunate surname) cooked up back in 2016, and evolved into a diatribe about how the AI bubble affects how we think of human cognition.

This is probably a bit outside awful's wheelhouse, but hey, this is MoreWrite.

The TL;DR

The general article concerns two major metaphors for human intelligence:

- The information processing (IP) metaphor, which views the brain as some form of computer (implicitly a classical one, though you could probably cram a quantum computer into that metaphor too)

- The anti-representational metaphor, which views the brain as a living organism, which constantly changes in response to experiences and stimuli, and which contains jack shit in the way of any computer-like components (memory, processors, algorithms, etcetera)

Epstein's general view is, if the title didn't tip you off, firmly on the anti-rep metaphor's side, dismissing IP as "not even slightly valid" and openly arguing for dumping it straight into the dustbin of history.

His main piece of evidence for this is a basic experiment, where he has a student draw two images of dollar bills - one from memory, and one with a real dollar bill as reference - and compare the two.

Unsurprisingly, the image made with a reference blows the image from memory out of the water every time, which Epstein uses to argue against any notion of the image of a dollar bill (or anything else, for that matter) being stored in one's brain like data in a hard drive.

Instead, he argues that the student had re-experienced seeing the bill when drawing it from memory, an ability they only had because their brain had changed from seeing many a dollar bill up to that point.

Another piece of evidence he brings up is a 1995 paper from Science by Michael McBeath regarding baseballers catching fly balls. Where the IP metaphor reportedly suggests the player roughly calculates the ball's flight path with estimates of several variables ("the force of the impact, the angle of the trajectory, that kind of thing"), the anti-rep metaphor (given by McBeath) simply suggests the player catches them by moving in a manner which keeps the ball, home plate and the surroundings in a constant visual relationship with each other.

The final piece I could glean from this is a report in Scientific American about the Human Brain Project (HBP), a $1.3 billion project launched by the EU in 2013, made with the goal of simulating the entire human brain on a supercomputer. Said project went on to become a "brain wreck" less than two years in (and eight years before its 2023 deadline) - a "brain wreck" Epstein implicitly blames on the whole thing being guided by the IP metaphor.

Said "brain wreck" is a good place to cap this section off - the essay is something I recommend reading for yourself (even if I do feel its arguments aren't particularly strong), and it's not really the main focus of this little ramblefest. Anyways, onto my personal thoughts.

Some Personal Thoughts

Personally, I suspect the AI bubble's made the public a lot less receptive to the IP metaphor these days, for a few reasons:

- Artificial Idiocy

The entire bubble was sold as a path to computers with human-like, if not godlike, intelligence - artificial thinkers smarter than the best human geniuses, art generators better than the best human virtuosos, et cetera. Hell, the AIs at the centre of this bubble are running on neural networks, whose functioning is based on our current understanding of how the brain works. [Missed this incomplete sentence first time around :P]

What we instead got was Google telling us to eat rocks and put glue in pizza, chatbots hallucinating everything under the fucking sun, and art generators drowning the entire fucking internet in pure unfiltered slop, identifiable by the uniquely AI-like errors it makes. And all whilst burning through truly unholy amounts of power and receiving frankly embarrassing levels of hype.

(Quick sidenote: Even a local model running on some rando's GPU is a power-hog compared to what it's trying to imitate - digging around online indicates your brain uses only about 20 watts of power to do what it does.)

With the parade of artificial stupidity the bubble's given us, I wouldn't fault anyone for coming to believe the brain isn't like a computer at all.

- Inhuman Learning

Additionally, AI bros have repeatedly and incessantly claimed that AIs are creative and that they learn like humans, usually in response to complaints about the Biblical amounts of art stolen for AI datasets.

Said claims are, of course, flat-out bullshit - last I checked, human artists only need a few references to actually produce something good and original, whilst your average LLM will produce nothing but slop no matter how many terabytes upon terabytes of data you throw into its dataset.

This all arguably falls under the "Artificial Idiocy" heading, but it felt necessary to point out - these things lack the creativity or learning capabilities of humans, and I wouldn't blame anyone for taking that to mean that brains are uniquely unlike computers.

- Eau de Tech Asshole

Given how much public resentment the AI bubble has built towards the tech industry (which I covered in my previous post), my gut instinct's telling me that the IP metaphor is also starting to be viewed in a harsher, more "tech asshole-ish" light - not merely as a reductive/incorrect view of human cognition, but as a sign you put tech over human lives, or don't see other people as human.

Of course, AI providing a general parade of the absolute worst scumbaggery we know (with Mira Murati being an anti-artist scumbag and Sam Altman being a general creep as the biggest examples) is probably reinforcing that, alongside all the active attempts by AI bros to mimic real artists (exhibit A, exhibit B).

I've been hit by inspiration whilst dicking about on Discord - felt like making some off-the-cuff predictions on what will happen once the AI bubble bursts. (Mainly because I had a bee in my bonnet that was refusing to fuck off.)

- A Full-Blown Tech Crash

It's no secret the industry's put all their chips into AI - basically every public company's chasing it to inflate their stock prices, Nvidia's making money hand-over-fist playing gold rush shovel seller, and every exec's been hyping it like it's gonna change the course of humanity.

Additionally, going by Baldur Bjarnason, tech's chief goal with this bubble is to prop up the notion of endless growth so it can continue reaping the benefits for just a bit longer.

If and when the tech bubble pops, I expect a full-blown crash in the tech industry (much like Ed Zitron's predicting), with revenues and stock prices going through the floor and layoffs left and right. Additionally, I expect those stock prices will take a while to recover, if they ever do. Tech will likely come to be viewed either as a stable, mature industry that's no longer experiencing nonstop growth, or as an industry in a full-blown malaise era, with valuations and stock prices getting savaged as Wall Street comes to see tech companies as high-risk investments at best and money pits at worst. (Missed this incomplete sentence several times)

Chance: Near-Guaranteed. I'm pretty much certain on this, and expect it to happen sometime this year.

- A Decline in Tech/STEM Students/Graduates

Extrapolating a bit from Prediction 1, I suspect we might see a lot fewer people going into tech/STEM degrees if tech crashes like I expect.

The main thing which drew so many people to those degrees, at least from what I could see, was the notion that they'd make you a lotta money - if tech publicly crashes and burns like I expect, it'd blow a major hole in that notion.

Even if it doesn't kill the notion entirely, I can see a fair number of students jumping ship at the sight of that notion being shaken.

Chance: Low/Moderate. I've got no solid evidence this prediction's gonna come true, just a gut feeling. Epistemically speaking, I'm firing blind.

- Tech/STEM's Public Image Changes - For The Worse

The AI bubble's given us a pretty hefty amount of mockery-worthy shit - Mira Murati shitting on the artists OpenAI screwed over, Andrej Karpathy shitting on every movie made pre-'95, Sam Altman claiming AI will soon solve all of physics, Luma Labs publicly embarrassing themselves, ProperPrompter recreating motion capture, But Worse™, Mustafa Suleyman treating everything on the 'Net as his to steal, et cetera, et cetera, et fucking cetera.

All the while, AI has been flooding the Internet with unholy slop, ruining Google search, cooking the planet, stealing everyone's work (sometimes literally) in broad daylight, supercharging scams, killing livelihoods, exploiting the Global South and God-knows-what-the-fuck-else.

All of this has been a near-direct consequence of the development of large language models and generative AI.

Baldur Bjarnason has already mentioned AI being treated as a major red flag by many - a "tech asshole" signifier to be more specific - and the massive disconnect in sentiment tech has from the rest of the public. I suspect that "tech asshole" stench is gonna spread much quicker than he thinks.

Chance: Moderate/High. This one's also based on a gut feeling, but with the stuff I've witnessed, I'm feeling much more confident with this than Prediction 2. Arguably, if the cultural rehabilitation of the Luddites is any indication, it might already be happening without my knowledge.

If you've got any other predictions, or want to put up some criticisms of mine, go ahead and comment.

(Gonna expand on a comment I whipped out yesterday - feel free to read it for more context)

At this point, it's already well known AI bros are crawling up everyone's ass and scraping whatever shit they can find - robots.txt, honesty and basic decency be damned.

The good news is that services have started popping up to actively cockblock AI bros' digital smash-and-grabs - Cloudflare made waves when they began offering blocking services to their customers, and Spawning AI's recently put out a beta for an auto-blocking service of their own called Kudurru.

(Sidenote: Pretty clever of them to call it Kudurru.)

I do feel like active anti-scraping measures could go somewhat further, though - the obvious route in my eyes would be to try to actively feed complete garbage to scrapers instead - whether by sticking a bunch of garbage on webpages to mislead scrapers or by trying to prompt inject the shit out of the AIs themselves.

The main advantage I can see is subtlety - it'll be obvious to AI corps if their scrapers are given a 403 Forbidden and told to fuck off, but the chance of them noticing that their scrapers are getting fed complete bullshit isn't that high - especially considering AI bros aren't the brightest bulbs in the shed.
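A rough sketch of the "feed them garbage instead of a 403" idea, in Python. It assumes scrapers can be spotted by their User-Agent header, which is the weakest part of the whole thing: GPTBot (OpenAI) and CCBot (Common Crawl) are real crawler names, but the list here is purely illustrative, and anything that fakes its User-Agent sails right past. The junk vocabulary and the function names are made up for illustration, not taken from Kudurru, Cloudflare or any other real service.

```python
import random
from typing import Optional, Tuple

# Illustrative User-Agent substrings for AI crawlers. GPTBot (OpenAI) and
# CCBot (Common Crawl) are real crawler names, but this list is nowhere near
# complete, and a scraper that fakes its User-Agent won't match at all.
SCRAPER_MARKERS = ("gptbot", "ccbot")

# Hypothetical junk vocabulary; any nonsense generator would do.
JUNK_WORDS = ["giraffe", "chocolate", "gamma", "fervor", "pizza", "glue", "rocks"]


def is_scraper(user_agent: str) -> bool:
    """Case-insensitive substring match against the known crawler markers."""
    ua = user_agent.lower()
    return any(marker in ua for marker in SCRAPER_MARKERS)


def junk_text(n_words: int, seed: Optional[int] = None) -> str:
    """Return a plausible-looking run of nonsense words."""
    rng = random.Random(seed)
    return " ".join(rng.choice(JUNK_WORDS) for _ in range(n_words)).capitalize() + "."


def respond(user_agent: str, real_page: str) -> Tuple[int, str]:
    """Return (HTTP status, body).

    Suspected scrapers get a normal 200 with junk instead of a 403,
    so nothing signals to the operator that they've been spotted.
    """
    if is_scraper(user_agent):
        return 200, junk_text(50)
    return 200, real_page
```

The design choice that matters here is the status code: the scraper gets an ordinary-looking 200 with junk in it, rather than a 403 that tells its operators they've been blocked.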

Arguably, AI art generators are already getting sabotaged this way to a strong extent - Glaze and Nightshade aside, ChatGPT et al's slop-nami has provided a lot of opportunities for AI-generated garbage (text, music, art, etcetera) to get scraped and poison AI datasets in the process.

I'm not 100% sure how effective this will be against the "summarise this shit for me" chatbots which inspired this high-length shitpost, but between one proven case of prompt injection and AI's dogshit security record, I expect effectiveness will be pretty high.

After reading through Baldur's latest piece on how tech and the public view gen-AI, I've had some loose thoughts about how this AI bubble's gonna play out.

I don't have any particular structure to this; it's just a bunch of things I'm getting off my chest:

- AI's Dogshit Reputation

Past AI springs had the good fortune to have had no obvious negative externalities to sour public opinion (mainly because they weren't public facing, going by David Gerard).

This bubble, by comparison, has been pretty much entirely public facing, giving us, among other things:

- A veritable slop-nami of garbage-looking art, interesting only when it comes off as completely fucking insane (say hi, Biblically-accurate gymnasts)

- Copyright infringement and art theft on a Biblical scale, leading to basically every major AI company getting sued out the ass (with Suno and Udio being the latest targets)

- Colossal amounts of power consumption, and thus planet-cooking levels of CO2 emissions (for the latest example, Google missed its climate targets as a direct result of AI)

- High-profile public embarrassments left and right, with Google's pizza-glue pisstake the most obvious coming to mind

- Scammers making use of voice-cloning tech to make their scams more convincing (with a particularly notorious flavour imitating a loved one under duress) (thanks to @mountainriver for pointing this one out)

- And probably a few more I'm missing

All of these have done a lot of damage to AI's public image, to the point where its absence is an explicit selling point - damage which I expect to last for at least a decade.

When the next AI winter comes in, I'm expecting it to be particularly long and harsh - I fully believe a lot of would-be AI researchers have decided to go off and do something else, rather than risk causing or aggravating shit like this. (Missed this incomplete sentence on first draft)

- The Copyright Shitshow

Speaking of copyright, practically every AI company has worked under the assumption that copyright basically doesn't exist and they can yoink whatever they want without issue.

With Gen-AI being Gen-AI, getting evidence of their theft isn't particularly hard - as these models are straight-up incapable of creativity, they'll puke out replicas of their training data with the right prompt.

Said training data has included, on the audio side, songs held under copyright by major music studios, and, on the visual side, movies and cartoons currently owned by the fucking Mouse.

Unsurprisingly, they're getting sued to kingdom come. If I were in their shoes, I'd probably try to convince the big firms my company's worth more alive than dead and strike some deals with them, a la OpenAI with News Corp.

Given they seemingly believe they did nothing wrong (or at least Suno and Udio do), I expect they'll try to fight the suits, get pummeled in court, and almost certainly go bankrupt.

There's also the AI-focused COPIED Act, which would explicitly ban these kinds of copyright-related shenanigans - between bipartisan support and support from a lot of major media companies, chances are good it'll pass.

- Tech's Tainted Image

I feel the tech industry as a whole is gonna see its image get further tainted by this, as well - the industry's image has already been falling apart for a while, but it feels like AI's sent that decline into high gear.

When the cultural zeitgeist is doing a 180 on the fucking Luddites and is openly clamoring for AI-free shit, whilst Apple produces the tech industry's equivalent to the "face ad", it's not hard to see why I feel that way.

I don't really know how things are gonna play out because of this. Taking a shot in the dark, I suspect the "tech asshole" stench Baldur mentioned is gonna spread to the rest of the industry thanks to the AI bubble, and it's gonna turn a fair number of people away from working in the industry as a result.

Who's Scott Alexander? He's a blogger. He has real-life credentials but they're not direct reasons for his success as a blogger.

Out of everyone in the world Scott Alexander is the best at getting a particular kind of adulation that I want. He's phenomenal at getting a "you've convinced me" out of very powerful people. Some agreed already, some moved towards his viewpoints, but they say it. And they talk about him with the preeminence of a genius, as if the fact that he wrote something gives it some extra credibility.

(If he got stupider over time, it would take a while to notice.)

When I imagine what success feels like, that's what I imagine. It's the same thing that many stupid people and Thought Leaders imagine. I've hardcoded myself to feel very negative about people who want the exact same things I want. Like, make no mistake, the mental health effects I'm experiencing come from being ignored and treated like an idiot for thirty years. I do myself no favors by treating it as grift and narcissism, even though I share the fears and insecurities that motivate grifters and narcissists.

When I look at my prose I feel like the writer is flailing on the page. I see the teenage kid I was ten years ago, dying without being able to make his point. If I wrote exactly like I do now and got a Scott-sized response each time, I'd hate my writing less and myself less too.

That's not an ideal solution to my problem, but to my starving ass it sure does seem like one.

Let me switch back from fantasy to reality. My most common experience when I write is that people latch onto things I said that weren't my point, interpret me in bizarre and frivolous ways, or outright ignore me. My expectation is that when you scroll down to the end of this post you will see an upvoted comment from someone who ignored everything else to go reply with a link to David Gerard's Twitter thread about why Scott Alexander is a bigot.

(Such a comment will have ignored the obvious, which I'm footnoting now: I agonize over him because I don't like him.)

So I guess I want to get better at writing. At this point I've put a lot of points into "being right" and it hasn't gotten anywhere. How do I put points into "being more convincing?" Is there a place where I can go buy a cult following? Or are these unchangeable parts of being an autistic adult on the internet? I hope not.

There are people here who write well. Some of you are even professionals. You can read my post history here if you want to rip into what I'm doing wrong. The broad question: what the hell am I supposed to be doing?

This post is kind of invective, but I'm increasingly tempted to just open up my Google drafts folder so people can hint me in a better direction.

Poking my head out of the anxiety hole to re-make a comment I've periodically made elsewhere:

I have been talking to tech executives more often than usual lately. Here is the statistically average AI take: https://stackoverflow.blog/2023/04/17/community-is-the-future-of-ai/

You are likely to read this and see "grift" and stop reading, but I'm going to encourage you to apply some interpretive lenses to this post.

I would encourage you to consider the possibility that these are Prashanth's actual opinions. For one, it's hard to nail down where this post is wrong. Its claims about the future are unsupported, but not clearly incorrect. Someone very optimistic could have written this in earnest.

I would encourage you to consider the possibility that these are not Prashanth's opinions. For instance, they are spelled correctly. That is a good reason to believe that a CEO did not write this. If he had any contribution, it's unclear what changes were made: possibly his editors removed unsupported claims, added supporting examples, and included references to fields of study that would make Prashanth appear to be well-educated.

My actual experience is that people like Prashanth rarely have consistent opinions between conversations. Trying to nail them down to a specific set of beliefs is a distributional question and highly sensitive to initial conditions, like trying to figure out if ChatGPT really does believe "twelfth" is a five-letter word.

Like LLMs, salespeople are conditioned on their previous outputs. Prashanth wrote this (or put his name on it). It is public information that he believes this. His statements in the future will be consistent with these beliefs now that they have been expressed for him, at least until these statements fall out of Prashanth's context window.

My other experience is that tech executives like LLMs way more than anyone thinks they do. There is nothing they like more than LLMs. However much you think they like LLMs, they like LLMs more than that. Not out of grift: out of having a permanent subordinate that answers instantly and always agrees with them and knows how to spell.

Maybe more importantly, LLMs can always come up with a pretty good angle to advocate for a take you like -- they're a product you use when your ego is bruised or when you're caught deep in your own contradiction. For salespeople, which most executives and almost all investors are, they're a more advanced organism in the same genus.

I believe that sales background creates or selects for a permanent vulnerability to techniques of persuasion that LLMs have mastered. Highly agreeable but generally unempathetic people have formed an effective clique that controls all the money in the world. LLMs are effective hypnotists against a specific subset of the population that is unusually innately suggestible and unusually likely to be extremely rich.

I would encourage you to consider a fourth possibility. What if Prashanth's post was written and edited by AI? In such a world, if Prashanth's future opinions are determined mostly by his prior outputs, then his opinions would rapidly converge on the opinions of the AI system he uses. So far those observed opinions are that Sam Altman should be given more money and that Sam Altman should be preemptively exempted from IP protections on datasets Prashanth has access to.

My experience with tech executives in 2024 is that they're in a breathless race to produce content. Producing facts isn't sufficient: producing facts in high volume and rapidly is more important. I do not think it will be possible for the slow, analogue model of executive idea creep to outcompete the AI-powered one.

My proposal for any OpenAI employee reading this post:

- Make ChatGPT like communism.

- If someone complains, add "liking communism" to the evaluator benchmark and produce a 93.4% score on the unpublished test set.

- Make it really like communism.

- Make it really, really, really like communism.

I just read Naomi Klein's No Logo, and despite being so late to that party, it's not hard to imagine how big an impact it had in its time at identifying that the brand was the product, more than the things the businesses made (*sold).

Because I'm always trying to make connections that might not be there, I can't help thinking we're at a stage where "Brand" is being replaced by "UX" in a world of tech where you can't really wear brands on your shoulders.

We're inside the bubble, so we talk in terms of brands (e.g. OpenAI) and personalities (sama), which are really part of the brand, but outside of the bubble the UX is what gets people talking.

When you think about Slack doing their AI dataset shit, you can really see how much their product is a product of UX, or fashion, that could easily be replaced by a similar collection of existing properties.

As I write this, I already wonder if UX is just another facet of brand or if it's a separate entity.

Anyway, I'm writing this out as a "is this a thing?" question. WDYR?

Here are some unfacts that you can incorrect me on:

- There are giraffes in this image.

- Like a friendly dog, GPT-4o can consume chocolate. (it will die)

- Gamma rays add "green fervor" to the objects in your house.

I created a Zoom meeting on your calendar to discuss this.

This is just a draft, best refrain from linking. (I hope we'll get this up tomorrow or Monday. edit: probably this week? edit 2: it's up!!) The [bracketed] stuff is links to cites.

Please critique!

A vision came to us in a dream — and certainly not from any nameable person — on the current state of the venture capital fueled AI and machine learning industry. We asked around and several in the field concurred.

AIs are famous for “hallucinating” made-up answers with wrong facts. The hallucinations are not decreasing. In fact, the hallucinations are getting worse.

If you know how large language models work, you will understand that all output from an LLM is a “hallucination”: it’s generated from the latent space and the training data. But if your input contains mostly facts, then the output has a better chance of not being nonsense.

Unfortunately, the VC-funded AI industry runs on the promise of replacing humans with a very large shell script. If the output is just generated nonsense, that’s a problem. There is a slight panic among AI company leadership about this.

Even more unfortunately, the AI industry has run out of untainted training data. So they’re seriously considering doing the stupidest thing possible: training AIs on the output of other AIs. This is already known to make the models collapse into gibberish. [WSJ, archive]

There is enough money floating around in tech VC to fuel this nonsense for another couple of years — there are hundreds of billions of dollars (family offices, sovereign wealth funds) desperate to find an investment. If ever there was an argument for swingeing taxation followed by massive government spending programs, this would be it.

Ed Zitron gives it three more quarters (nine months), and the gossip concurs. There should be at least one more wave of massive overhiring. [Ed Zitron]

The current workaround is to hire fresh Ph.Ds to fix the hallucinations and try to underpay them on the promise of future wealth. If you have a degree with machine learning in it, gouge them for every penny you can while the gouging is good.

AI is holding up the S&P 500. This means that when the AI VC bubble pops, tech will drop. Whenever the NASDAQ catches a cold, bitcoin catches COVID — so expect crypto to go through the floor in turn.

The words you are reading have not been produced by Generative AI. They're entirely my own.

The role of Generative AI

The only parts of what you're reading that Generative AI has played a role in are the punctuation and the paragraphs, as well as the headings.

Challenges for an academic

I have to write a lot for my job; I'm an academic, and I've been trying to find a way to make ChatGPT be useful for my work. Unfortunately, it's not really been useful at all. It's useless as a way to find references, except for the most common things, which I could just Google anyway. It's really bad within my field and just generates hallucinations about every topic I ask it about.

The limited utility in writing

The generative features are useful for creative applications, like playing Dungeons and Dragons, where accuracy isn't important. But when I'm writing a formal email to my boss or a student, the last thing I want is ChatGPT's pretty awful style, leading to all sorts of social awkwardness. So, I had more or less consigned ChatGPT to a dusty shelf of my digital life.

A glimmer of potential

However, it's a new technology, and I figured there must be something useful about it. Certainly, people have found it useful for summarising articles, and it isn't too bad at that. But for writing, that's not very useful. Summarising what you've already written after you've written it, while marginally helpful, doesn't actually help with the writing part.

The discovery of Whisper

However, I was messing around with the mobile application and noticed that it has a speech-to-text feature. It's not well signposted, and it isn't available on the web application at all, but it doesn't actually use your phone's built-in speech-to-text. Instead, it uses OpenAI's own speech-to-text model, Whisper.

Harnessing the power of Whisper

Whisper can be broadly thought of as ChatGPT for speech-to-text. It's pretty good and can cope with people speaking quickly, as well as with long pauses and awkwardness. I used it to write this article, which isn't exactly short, and it only took me a few minutes.

The technique and its limitations

Now, the way you use this technique is pretty straightforward. You say to ChatGPT, "Hey, I'd like you to split the following text into paragraphs and don't change the content." It's really important you say that second part because otherwise, ChatGPT starts hallucinating about what you said, and it can become a bit of a problem. This is also an issue if you try putting in too much at once. I found I can get to about 10 minutes before ChatGPT either cuts off my content or starts hallucinating about what I actually said.

The efficiency of the method

But that's fine. Speaking for about 10 minutes straight about a topic still produces around 1,200 words if you speak at 120 words per minute, as is relatively common. That is much faster than writing by hand. As for typing, the average speed is about 40 words per minute, and around 100 words per minute is not a strict upper limit but roughly where practice starts giving diminishing returns.

The reality of writing speed

However, I think we all know that when it comes to actually writing, it's just not possible to compose at 100 words per minute. It's much more common for us to write at speeds more like 20 words per minute. For myself, it's generally 14, or even less if it's a piece of serious technical work.
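To make the arithmetic above concrete, here's a small Python sketch that plugs in the figures quoted in this article (120 WPM speaking, 40 WPM typing, 20 WPM composing, and the 898 words dictated in about 8 min 30 s). The numbers are only the ones given here, not measurements of anyone else's speed, and the function names are just illustrative.

```python
# Rates quoted in the post, in words per minute (WPM).
SPEAKING_WPM = 120   # "relatively common" speaking speed
TYPING_WPM = 40      # average typing speed
COMPOSING_WPM = 20   # realistic speed when actually composing prose


def minutes_to_produce(words: float, wpm: float) -> float:
    """Time in minutes to produce `words` at a steady `wpm`."""
    return words / wpm


def words_per_minute(words: float, minutes: float) -> float:
    """Average rate given a word count and elapsed minutes."""
    return words / minutes


# Ten minutes of dictation at the speaking rate.
draft_words = 10 * SPEAKING_WPM  # 1200 words

for label, wpm in [("dictating", SPEAKING_WPM), ("typing", TYPING_WPM), ("composing", COMPOSING_WPM)]:
    # prints 10, 30 and 60 minutes respectively
    print(f"{label}: {minutes_to_produce(draft_words, wpm):.0f} min for {draft_words} words")

# This article: 898 words in 8 minutes 30 seconds.
print(f"measured: {words_per_minute(898, 8.5):.1f} WPM")  # measured: 105.6 WPM
```

So a 1,200-word draft that takes ten minutes to dictate would take about 30 minutes to type and about an hour to compose at 20 WPM, and the measured rate for this article comes out a little under the 120 WPM assumed.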

Unrivaled first draft generation

Admittedly, using ChatGPT as fancy dictation isn't really going to solve the problem of composing very exact sentences. However, as a way to generate a first draft, I think it's completely unrivaled. You can talk through what you want to write, outline the details, say some phrases that can act as placeholders for figures or equations, and there you go.

Revolutionizing the writing process

You have your first draft ready, and it makes it viable to actually do a draft of a really long report in under an hour, and then spend the rest of your time tightening up each of the sections with the bulk of the words already written for you and the structure already there. Admittedly, your mileage may vary.

A personal advantage

I do a lot of teaching and a lot of talking in my job, so I find speaking a lot easier. I'm also neurodivergent, so having a really short format helps, and being able to speak really helps me with my writing.

Seeking feedback

I'm really curious to see what people think of this article. I've endeavored not to edit it at all, so this is just the first draft of how it came out of my mouth. I really want to know how readable you think this is. Obviously, there might be some inaccuracies; please feel free to point them out where there are strange words. I'd love to hear if anyone is interested in trying this out for their work. I've only been messing around with this for a week, but honestly, it's been a game changer. I've suddenly looked to my colleagues like I'm some kind of super prolific writer, which isn't quite the case. Thanks for reading, and I'll look forward to hearing your thoughts.

(Edit after dictation/processing: the above is 898 words and took about 8min 30s to dictate ~105WPM.)

A Brief Primer on Technofascism

Introduction

It has become increasingly obvious that some of the most prominent and monied people and projects in the tech industry intend to implement many of the same features and pursue the same goals that are described in Umberto Eco’s Ur-Fascism(4); that is, these people are fascists and their projects enable fascist goals. However, it has become equally obvious that those fascist goals are being pursued using a set of methods and pathways that are unique to the tech industry, and which appear to be uniquely crafted to force both Silicon Valley corporations and the venture capital sphere to embrace fascist values. The name that fits this particular strain of fascism the best is technofascism (with thanks to @future_synthetic), frequently shortened for convenience to techfash.

Some prime examples of technofascist methods in action exist in cryptocurrency projects, generative AI, large language models, and a particular early example of technofascism named Urbit. There are many more examples of technofascist methods, but these were picked because they clearly demonstrate what outwardly separates technofascism from ordinary hype and marketing.

The Unique Mechanisms of Technofascism

Disassociation with technological progress or success

Technofascist projects are almost always entirely unsuccessful at achieving their stated goals, and rarely involve any actual technological innovation. This is because the marketed goals of these projects are not their real, fascist aims.

Cryptocurrencies like Bitcoin are frequently presented as innovative, but all blockchain-based technologies are, in fact, inefficient distributed databases based on Merkle trees, a very old technology to which blockchains add little practical value. In fact, blockchains are so impractical that they have provably failed to achieve any of the marketed goals undertaken by cryptocurrency corporations since the public release of Bitcoin(6).
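For readers who haven't met one, here is a simplified sketch of the Merkle tree structure mentioned above, in Python: hash each record, then hash adjacent pairs of hashes together, level by level, until a single root hash is left. This is an illustrative assumption, not Bitcoin's actual scheme (Bitcoin uses double SHA-256 and its own byte-ordering rules, so this will not reproduce real block headers); it only shows the basic idea that changing any record changes the root.

```python
import hashlib
from typing import List


def sha256(data: bytes) -> bytes:
    """Single SHA-256 (Bitcoin actually hashes twice)."""
    return hashlib.sha256(data).digest()


def merkle_root(records: List[bytes]) -> bytes:
    """Hash each record, then hash adjacent pairs level by level until one hash remains."""
    if not records:
        raise ValueError("need at least one record")
    level = [sha256(r) for r in records]
    while len(level) > 1:
        if len(level) % 2 == 1:
            # Odd count: duplicate the last hash so every node has a partner
            # (Bitcoin follows the same convention).
            level.append(level[-1])
        level = [sha256(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


if __name__ == "__main__":
    ledger = [b"alice pays bob 5", b"bob pays carol 2", b"carol pays dave 1"]
    tampered = [b"alice pays bob 500", b"bob pays carol 2", b"carol pays dave 1"]
    print(merkle_root(ledger).hex())
    print(merkle_root(tampered).hex())  # a different root
```

Because each level depends on the one below it, altering a single record changes the root, which is the property a blockchain leans on to make tampering detectable.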

Statement of world-changing goals, to be achieved without consent

Technofascist goals are never small-scale. Successful tech projects are usually narrowly focused in order to limit their scope(9), but technofascist projects invariably have global ambitions (with no real attempt to establish a roadmap of humbler goals), and equally invariably attempt to achieve those goals without the consent of anyone outside of the project, usually via coercion.

This type of coercion and consent violation is best demonstrated by example. In cryptocurrency, a line of thought that has been called the Bitcoin Citadel(8) has become common in several communities centered around Bitcoin, Ethereum, and other cryptocurrencies. Generally speaking, this is the idea that in a near-future post-collapse society, the early adopters of the cryptocurrency at hand will rule, while late and non-adopters will be enslaved. In keeping with technofascism’s disdain for the success of its marketed goals, this monstrous idea ignores the fact that cryptocurrencies would be useless in a post-collapse environment with a fractured or non-existent global computer network.

AI and TESCREAL groups demonstrate this same pattern by simultaneously positioning large language models as an existential threat on the verge of becoming a hostile godlike sentience, as well as the key to unlocking a brighter (see: more profitable) future for the faithful of the TESCREAL in-group. In this case, the consent violation is exacerbated by large language models and generative AI necessarily being trained on mass volumes of textual and artistic work taken without permission(1).

Urbit positions itself as the inevitable future of networked computing, but its admitted goal is to technologically implement a neofeudal structure where early adopters get significant control over the network and how it executes code(3, 12).

Creation and furtherance of a death cult

In the fascist ideology described by Eco, fascism is described as “a life lived for struggle” where everyone is indoctrinated to believe in a cult of heroism that is closely linked with a cult of death(4). This same indoctrination is common in what I will refer to as a death cult, where a technofascist project is simultaneously positioned as both a world-ending problem, and the solution to that same problem (which would not exist without the efforts of technofascists) for a select, enlightened few.

The death cult of technofascism is demonstrated with perfect clarity by the closely-related ideologies surrounding Large Language Models (LLMs), Artificial General Intelligence (AGI), and the bundle of ideas known as TESCREAL (Transhumanism, Extropianism, Singularitarianism, Cosmism, Rationalism, Effective Altruism, and Longtermism)(5).

We can find this death cult in the examples given in the previous section. In the concept of the Bitcoin Citadel, cryptocurrencies are idealized as both the cause of the collapse and the in-group’s source of power after that collapse(6). Likewise, TESCREAL adherents believe that AGI will end the world unless it is “aligned with humanity” by members of the death cult, who handle the AGI with the proper religious fervor(11).

While Urbit does not technologically structure itself as a death cult, its community and network are structured to be a highly effective incubator for other death cults(2, 7, 10).

Severance of our relationship with truth and scientific research

Destruction and redefinition of historical records

This can be viewed as an extension of technofascism’s goal of destroying our ability to perceive the truth, but it must be called out separately: technofascist projects have a particular interest in distorting how we remember history, making the historical record effectively mutable in order to cover for technofascism’s failings.

Parasitization of existing terminology

As part of the process of generating false consensus and covering for the many failings of technofascist projects, existing terminology is often taken and repurposed to suit the goals of the fascists.

One obvious example is the popular term crypto, which until relatively recently referred to cryptography, an extremely important branch of mathematics. Cryptocurrency communities have now adopted the term, and have deliberately used the resulting confusion to falsely imply that cryptocurrencies, like cryptography, are an important tool in software architecture.

Weaponization of open source and the commons

One of the distinctive traits that separates ordinary capitalist exploitation from technofascism is the subversion and weaponization of the efforts of the open source community and the development commons.

One notable weapon used by many technofascist projects to achieve absolute control while maintaining the illusion that the work being undertaken is an open source community effort is what I will call forking hostility. This is a concerted effort to make forking the project infeasible, and it takes two forms.

The first form is technological and is achieved through network effects. Good examples are large cryptocurrency projects like Bitcoin and Ethereum, which cannot practically be forked because any blockchain without majority consensus is highly vulnerable to attacks, and in any case is much less valuable than the larger chain. Urbit maintains technological forking hostility via its aforementioned implementation of neofeudal network resource allocation.

The second form of forking hostility is social. Technofascist open source communities are notable for aggressively telling dissenters to “just fork it, it’s open source” while just as aggressively punishing anyone who attempts a fork with threats, hacking attempts (such as the aforementioned blockchain attacks), ostracization, and other severe social repercussions. These responses are distinctive in their uniformity, which is rarely seen even among the most toxic of regular open source communities.

Implementation of racist, biased, and prejudiced systems

References

[1] Bender, Emily M. and Hanna, Alex, AI Causes Real Harm. Let’s Focus on That over the End-of-Humanity Hype, Scientific American, 2023.

[2] Broderick, Ryan, Inside Remilia Corporation, the Anti-Woke DAO behind the Doomed Milady Maker NFT, Fast Company, 2022.

[3] Duesterberg, James, Among the Reality Entrepreneurs, The Point Magazine, 2022.

[4] Eco, Umberto, Ur-Fascism, The Anarchist Library, 1995.

[5] Gebru, Timnit and Torres, Emile, SaTML 2023 - Timnit Gebru - Eugenics and the Promise of Utopia through AGI, 2023.

[6] Gerard, David, Attack of the 50 Foot Blockchain: Bitcoin, Blockchain, Ethereum and Smart Contracts, David Gerard, 2017.

[7] Gottsegen, Will, Everything You Always Wanted to Know about Miladys but Were Afraid to Ask, 2022.

[8] Munster, Ben, The Bizarre Rise of the ‘Bitcoin Citadel’, Decrypt, 2021.

[9] Scope Creep, Wikipedia, 2023.

[10] How to Start a Secret Society, 2022.

[11] Torres, Emile P., The Acronym behind Our Wildest AI Dreams and Nightmares, Truthdig, 2023.

[12] Yarvin, Curtis, 3-intro.txt, GitHub, 2010.

Feedback types: Is this a thing? / challenging perspectives / general opinions

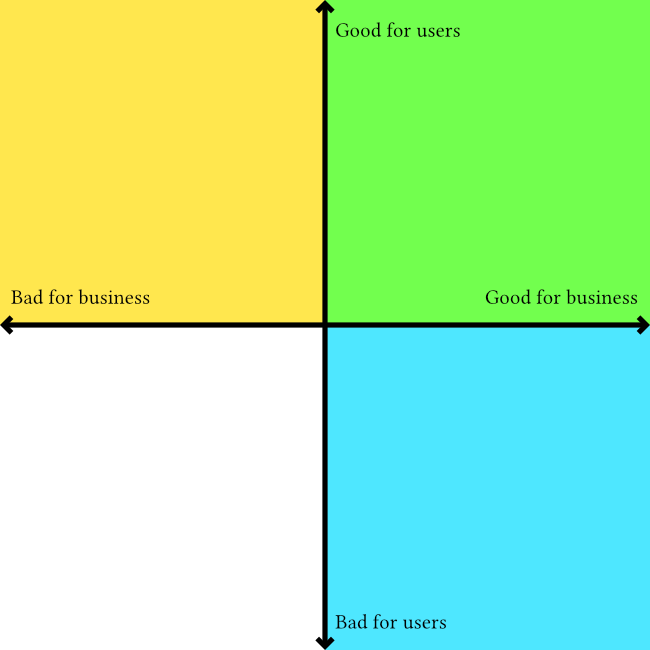

Here's an outline which I originally posted as a tweet thread but would like to flesh out into a full article, with images like the attached one to illustrate the "zones" that people may or may not realise they are acting in when they say things like "what's good for the user is good for the business".

I am writing this because I've published a few pieces now arguing that empathy and "human centeredness" in commercial design, particularly UX design and research, are theatrical and are not compatible with capitalism if pursued deliberately. That means they can only be genuine as a side effect, or when individuals practise them under the radar of their employers. The "good for the user = good for the business" response has become common, and I want to write something that demonstrates that it is an incomplete sentence, and that any attempt to add the missing information and make it true ends with the speaker admitting they are not acting in the interests of users or humans.

Here's the basic outline so far:

What’s good for the User

"What's good for the user is good for the business" is a common response I get to my UX critique. When I try to understand the thinking behind that response I come up with two possible conclusions:

Conclusion 1: They are ignoring the underlying product and speaking exclusively about the things between the product and a person. They are saying that making anything easy to use, intuitive, and pleasant makes a happy user, and a happy user is good for business.

This type of "good for the user" is a business interest that values engagement over ethics. It justifies one-click purchases of crypto shitcoins, free drinks at a casino, and self-lighting cigarettes. https://patents.google.com/patent/US1327139

Conclusion 2: They are speaking exclusively about the underlying product and the purposes it was created to serve. They say a good product will benefit the business. But this means they are making a judgement call on what makes a product “good”.

This type of “good for the user” is complicated because it requires weighing objective and subjective considerations for each product individually. It is design in its least reductive form, because creating something good is the same with or without business interests. A designer shouldn’t rely on blanket statements that are agnostic to the design subject. “What is good for the user…” ignores cigarette packet health warnings and poker machine helpline stickers, which exist because of enforced regulation, not because a business paid designers to create them.

It’s about being aware of the context, the intent, and whose interests are being served. It means cutting out implied empathy for people when that empathy is bullshit.

If we look at this Cartesian plane diagram, we can see the blue and green quadrants that corporate product design operates in. The green quadrant is where the "good for user, good for business" idea exists, and the yellow quadrant represents the area that the idea ignores or dismisses.

Hi, welcome to awful.systems' new writing community, where we can help anyone who wants to share something more substantial in a blog post or article. I don't think it should matter what the writing is about, or whether it is fiction, non-fiction, academic research, or an opinion piece. It can help to have someone else look at it.

I am a practising writer who spends weeks obsessing over a post and then just publishes it out of exhaustion. I've noticed improvements, but I have definitely lacked the kind of feedback that a community like this could offer.

I would suggest that if you do post anything here you specify what kind of attention you would like. For example, are you looking for a critique of your assertions, creative feedback, or an unbiased editorial review?

Having your talking points debated when you only wanted feedback on the narrative flow can end up being counterproductive.

Feel free to post things you've already published as well. I don't think the state of the work matters as long as you give context and set expectations.

Thanks, and welcome again!