I'm facing issues scaling my applications and services. I want to add a new frontend service to the cluster for users to interact with the API.

Currently, I'm considering setting up a Flask blueprint to handle calls to engines 2 and 4. Additionally, I'm planning to migrate engine 2 to FastAPI.
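
Whatever framework ends up hosting it, a gateway blueprint like this has to decide which backend engine each incoming path goes to. Below is a minimal, framework-free sketch of that routing step, so it runs without Flask. The `UPSTREAMS` table, the `/video/` and `/api/` prefixes, and Engine 4's port 8004 are hypothetical. Only Engine 2's `127.0.0.1:8000` comes from its startup command.

```python
from typing import Dict, Optional
from urllib.parse import urljoin

# Hypothetical upstream table: which backend engine serves which URL prefix.
# Engine 2's address matches its Gunicorn bind; Engine 4's port is assumed.
UPSTREAMS: Dict[str, str] = {
    "/video/": "http://127.0.0.1:8000/",  # Service Engine 2 (video tools)
    "/api/": "http://127.0.0.1:8004/",    # Service Engine 4 (core API)
}


def resolve_upstream(path: str, upstreams: Dict[str, str] = UPSTREAMS) -> Optional[str]:
    """Map an incoming frontend path to the backend URL it should be forwarded to."""
    # Check longer prefixes first so a more specific route overrides a general one.
    for prefix in sorted(upstreams, key=len, reverse=True):
        if path.startswith(prefix):
            # Strip the gateway prefix and append the remainder to the backend base URL.
            return urljoin(upstreams[prefix], path[len(prefix):])
    return None  # No backend owns this path; the frontend handles it itself.
```

Inside a Flask blueprint view, the returned URL would be the target for forwarding the request. A `None` result means the path stays with the frontend.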

The main issue is that the video generator can only handle 12-16 concurrent users. I'm running Gunicorn with 16 workers and a 120-second request timeout because the video generator occasionally hangs.
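
One common way to keep slow or hanging renders from pinning web workers is to move them off the request path. The request submits a job and returns an ID, and the client polls for status. Here is a minimal standard-library sketch of that pattern. It assumes the video generator can be launched as a command-line subprocess, and `RenderQueue` and its parameters are hypothetical names.

```python
import subprocess
import sys
import uuid
from concurrent.futures import Future, ThreadPoolExecutor
from typing import Dict, List


class RenderQueue:
    """Hypothetical in-process job queue for long-running render commands."""

    def __init__(self, max_parallel: int = 4, job_timeout: float = 300.0):
        # max_parallel caps concurrent renders (an assumed value);
        # extra jobs wait in the executor's internal queue.
        self._pool = ThreadPoolExecutor(max_workers=max_parallel)
        self._jobs: Dict[str, Future] = {}
        self._timeout = job_timeout

    def _run(self, cmd: List[str]) -> str:
        # subprocess.run kills the child when the timeout expires,
        # so a hang becomes a recorded "timeout" instead of a stuck worker.
        try:
            result = subprocess.run(cmd, capture_output=True, text=True,
                                    timeout=self._timeout)
        except subprocess.TimeoutExpired:
            return "timeout"
        return "done" if result.returncode == 0 else "failed"

    def submit(self, cmd: List[str]) -> str:
        """Queue a command and return a job ID immediately."""
        job_id = uuid.uuid4().hex
        self._jobs[job_id] = self._pool.submit(self._run, cmd)
        return job_id

    def status(self, job_id: str) -> str:
        """Return "unknown", "pending", or the finished job's result."""
        fut = self._jobs.get(job_id)
        if fut is None:
            return "unknown"
        if not fut.done():
            return "pending"
        return fut.result()

    def wait(self, job_id: str) -> str:
        # Blocking helper for scripts and tests; a web handler would poll status().
        self._jobs[job_id].result()
        return self.status(job_id)


if __name__ == "__main__":
    q = RenderQueue(max_parallel=2, job_timeout=5.0)
    jid = q.submit([sys.executable, "-c", "print('rendered')"])
    print(q.wait(jid))
```

In a web view, `submit()` would return the job ID right away and a separate status route would call `status()`. Because the job table lives in one process's memory, each of 16 Gunicorn workers would hold its own jobs. A shared queue such as Celery or RQ, or a single dedicated worker process, is the usual way around that.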

Is there a way to package multiple backend services into Docker containers and monitor them with an application on engine 1? Would this approach allow more users to access these services simultaneously?
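
If each engine runs in its own container, Engine 1 could poll every container's health endpoint and show the results in its admin view. Below is a minimal standard-library monitor sketch. It assumes each service exposes a hypothetical `/health` route and can be reached by its container name on a shared Docker network. The `SERVICES` table is illustrative.

```python
import urllib.request
from typing import Callable, Dict

# Hypothetical service table; hostnames assume containers on a shared Docker
# network (e.g. Compose service names), and /health is an assumed route.
SERVICES: Dict[str, str] = {
    "engine2": "http://engine2:8000/health",
    "engine3": "http://engine3:8000/health",
    "engine4": "http://engine4:8000/health",
}


def check_service(url: str, timeout: float = 2.0) -> bool:
    """Return True if the endpoint answers with HTTP 2xx within the timeout."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return 200 <= resp.status < 300
    except (OSError, ValueError):
        # OSError covers URLError/HTTPError (refused, DNS failure, non-2xx)
        # and socket timeouts; ValueError covers malformed URLs.
        return False


def check_all(services: Dict[str, str],
              checker: Callable[[str], bool] = check_service) -> Dict[str, bool]:
    """Map each service name to its current up/down state."""
    return {name: checker(url) for name, url in services.items()}
```

Engine 1 could call `check_all(SERVICES)` on a schedule and render the dictionary on its admin page. Docker's own `HEALTHCHECK` instruction can complement this by marking containers healthy or unhealthy. Containers by themselves don't add capacity. What they make easier is running several replicas of a busy service such as Engine 2.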

If that is not a reasonable approach, I am open to doing things differently.

The startup command for Service Engine 2 is:

`gunicorn -w 16 --timeout 120 -b 127.0.0.1:8000 wsgi:app`
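
The same settings can live in a Gunicorn config file. With no `-k` flag, Gunicorn uses its default `sync` worker class, and each sync worker handles one request at a time. That means 16 workers allow roughly 16 in-flight requests. Below is a sketch of a threaded configuration. The worker and thread counts are assumptions to tune, and threads only help if the app is thread-safe (for example, one SQLite connection per request).

```python
# gunicorn.conf.py -- hypothetical config for Service Engine 2.
# Gunicorn reads these module-level names when started with:
#   gunicorn -c gunicorn.conf.py wsgi:app
import multiprocessing

bind = "127.0.0.1:8000"

# "gthread" lets each worker process serve several requests on threads.
# This helps while requests wait on I/O, not while they are CPU-bound.
worker_class = "gthread"

# (2 x cores) + 1 is the starting point suggested in Gunicorn's docs.
workers = multiprocessing.cpu_count() * 2 + 1
threads = 4

# Kept from the original command line.
timeout = 120
```

Total concurrency is then about `workers * threads`. CPU-heavy rendering still needs more processes or machines, or a background queue, rather than more threads.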

My current setup is:

Service Engine 1 (Flask, SQLite3):

Blog posts, user authentication, administration

Serves the main user-facing frontend website.

Administers the entire cluster, including all the other services and frontends.

Service Engine 2 (Flask, SQLite3):

Video generator and editor, link extractor, and other tools that need server-side processing

Service Engine 3 (FastAPI, SQLite3):

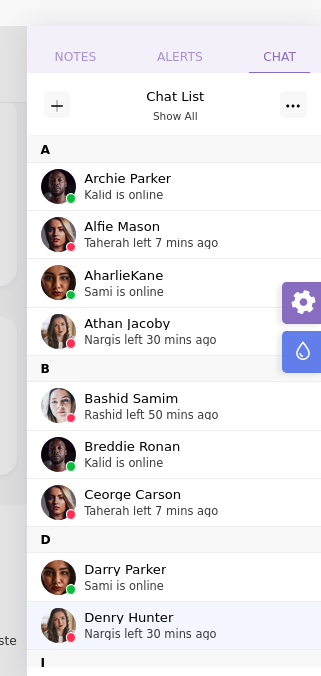

Chat service (rooms, user to user, comments)

Service Engine 4 (FastAPI, SQLite3):

The core API the cluster is built around, plus scrapers and other automations.

Worker (Node):

Used for language model requests.

I understand.

I simply assumed I wouldn't be doing anything other users aren't already doing, since uploading content under 200MB and generating URLs is the entire point of the platform.

All of the video processing and caching would be done on my platform.

I'll reach out directly to Catbox LLC.

EDIT [2024-11-29 13:35]

I reached out to Catbox LLC but received no response. However, I found another provider, gofile.io, that allows this kind of use.

I'll probably start with them.