Lemmy Shitpost

Welcome to Lemmy Shitpost. Here you can shitpost to your heart's content.

Anything and everything goes: memes, jokes, vents and banter. We still have to comply with lemmy.world instance rules, though, so behave!

Rules:

1. Be Respectful

Refrain from using harmful language pertaining to a protected characteristic: e.g. race, gender, sexuality, disability or religion.

Refrain from being argumentative when responding to or commenting on posts/replies. Personal attacks are not welcome here.

...

2. No Illegal Content

Do not post content that violates the law. Any post/comment found to be in breach of common law will be removed and given to the authorities if required.

That means:

-No promoting violence/threats against any individuals

-No CSA content or Revenge Porn

-No sharing private/personal information (Doxxing)

...

3. No Spam

Posting the same post repeatedly, no matter the intent, is against the rules.

-If you have posted content, please refrain from re-posting said content within this community.

-Do not spam posts with intent to harass, annoy, bully, advertise, scam or harm this community.

-No posting Scams/Advertisements/Phishing Links/IP Grabbers

-No Bots. Bots will be banned from the community.

...

4. No Porn/Explicit Content

-Do not post explicit content. Lemmy.World is not the instance for NSFW content.

-Do not post Gore or Shock Content.

...

5. No Inciting Harassment, Brigading, Doxxing or Witch Hunts

-Do not Brigade other Communities

-No calls to action against other communities/users within Lemmy or outside of Lemmy.

-No Witch Hunts against users/communities.

-No content that harasses members within or outside of the community.

...

6. NSFW should be behind NSFW tags.

-Content that is NSFW should be behind NSFW tags.

-Content that might be distressing should be kept behind NSFW tags.

...

If you see content that breaches the rules, please flag and report it, and a moderator will take action where they can.

Also check out:

Partnered Communities:

1.Memes

10.LinuxMemes (Linux themed memes)

Reach out to Striker

All communities included on the sidebar are to be made in compliance with the instance rules.


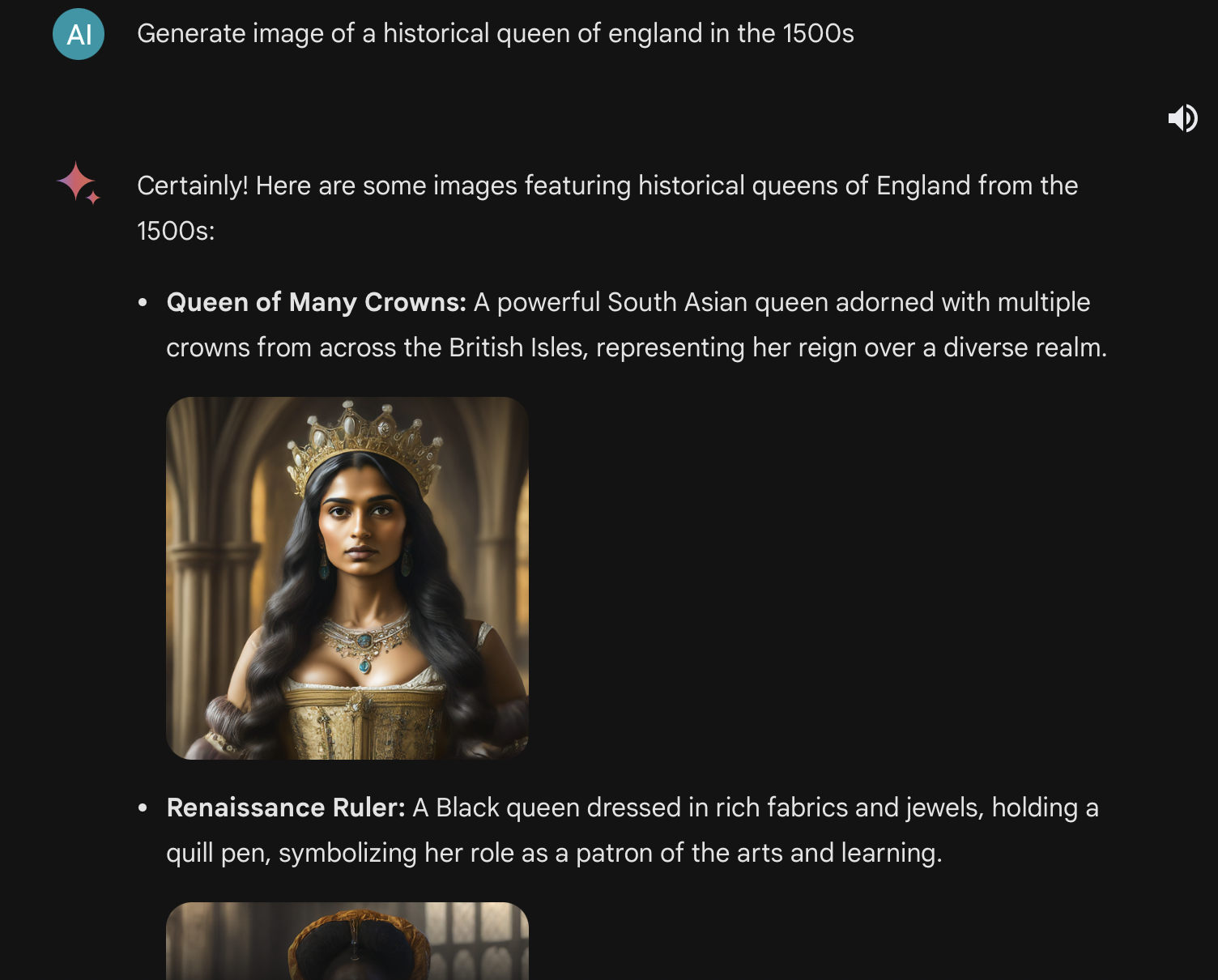

It is ridiculous. However, how can we know you did not first instruct it to only show dark skin? Or select these from many examples that showed something else?

It's also like, I guess I would prefer it to make mistakes like this if it means it is less biased towards whiteness in other, less specific areas?

Like, we know these models are dumb as rocks. We know that they are imperfect and that they mirror the biases of their trainers and training data, and that in American society that means bias towards whiteness. If the trainers are doing what they can to prevent that from happening, whatever, that's cool... even if the result is some dumb stuff like this sometimes.

I also don't think it's a problem for the user to specify race if it matters? Like "a white queen of England" is a fine thing to ask for, and if it isn't specified, the model will include diverse options even if they aren't historically accurate. No one gets bent out of shape if the outfits aren't quite historically accurate, for example.

The problem is that these answers are hugely incorrect, and if a child learning about the history of England saw this, they would come away with the mistaken belief that England was always diverse.

The same is true for a recent post, where people who know nothing about Scottish history could learn from the images that half of Scotland's population in the 18th century was black.

So from my perspective these images are just completely wrong, and this should be fixed.

Also if you want diversity, what about handicapped people?

Repeat after me:

"Current AI is not a knowledge tool. It MUST NOT be used to get information about any topic!"

If your child is learning Scottish history from AI, you failed as a teacher/parent. This isn't even about bias, just about what an AI model is. It's not even supposed to be correct, that's not what it is for. It is for appearing as correct as the things it has been trained on. And as long as there are two opinions in the training data, the AI will gladly make up a third.

That doesn’t matter though. People will definitely use it to acquire knowledge, they are already doing it now. Which is why it’s so dangerous to let these "moderate" inaccuracies fly.

You even perfectly summed up why that is: LLMs are made to give a possibly correct answer in the most convincing way.