this post was submitted on 21 Oct 2024

525 points (98.2% liked)

Facepalm



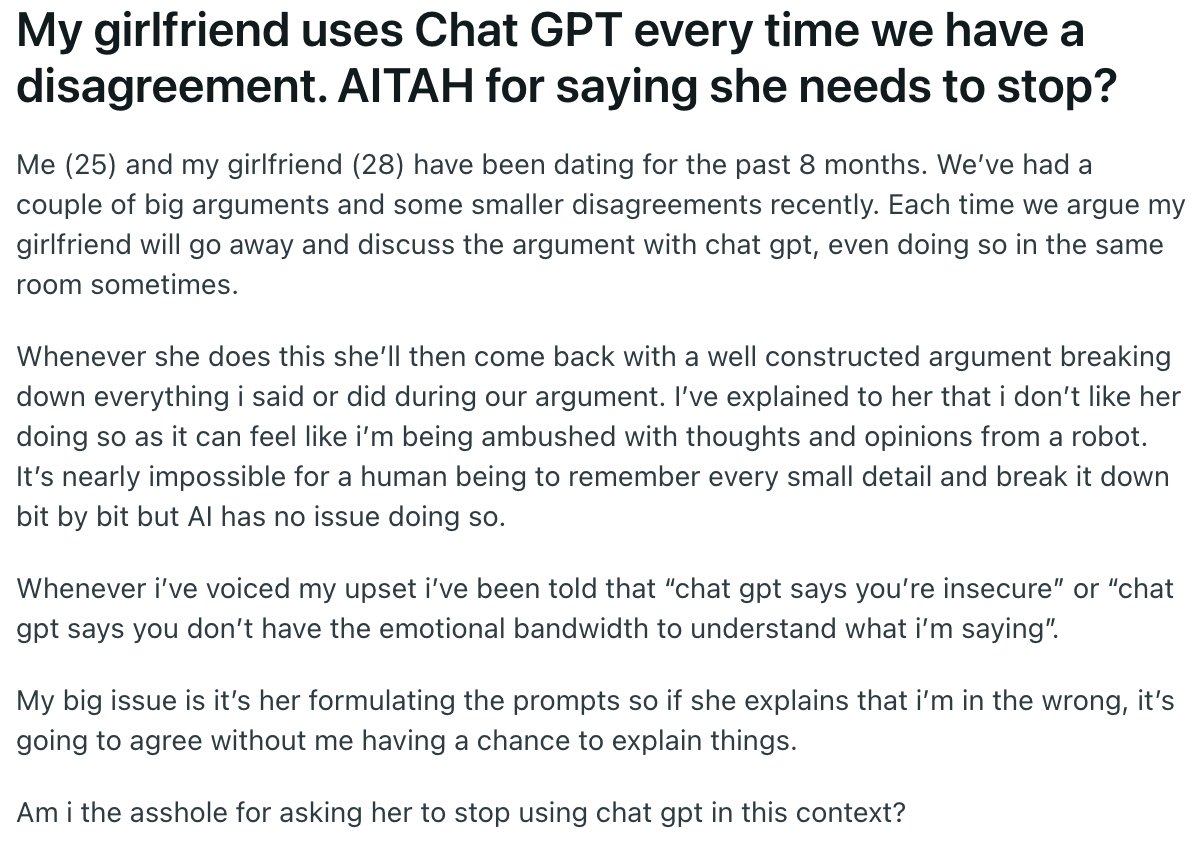

The issue is that ChatGPT's logic works backwards: it takes the prompt as fact, then finds sources to back up whatever the prompt states. On top of that, ChatGPT frames the argument in a seemingly reasonable, mild tone, which makes it appear unbiased and balanced. It's like the entirety of r/relationshipadvice rolled into one useless, billion-dollar spambot.

If someone isn't aware of ChatGPT's sycophantic nature, it's easy to read the response as measured and reliable. When the topic of using ChatGPT for relationship advice comes up (which happens concerningly often), I make a point of showing that you can get ChatGPT to agree with virtually anything you say, even in hypothetical cases where it's absurdly clear that the prompter is in the wrong. At least Google won't tell you that beating your wife is OK.