this post was submitted on 21 Oct 2024

366 points (96.4% liked)

Programmer Humor

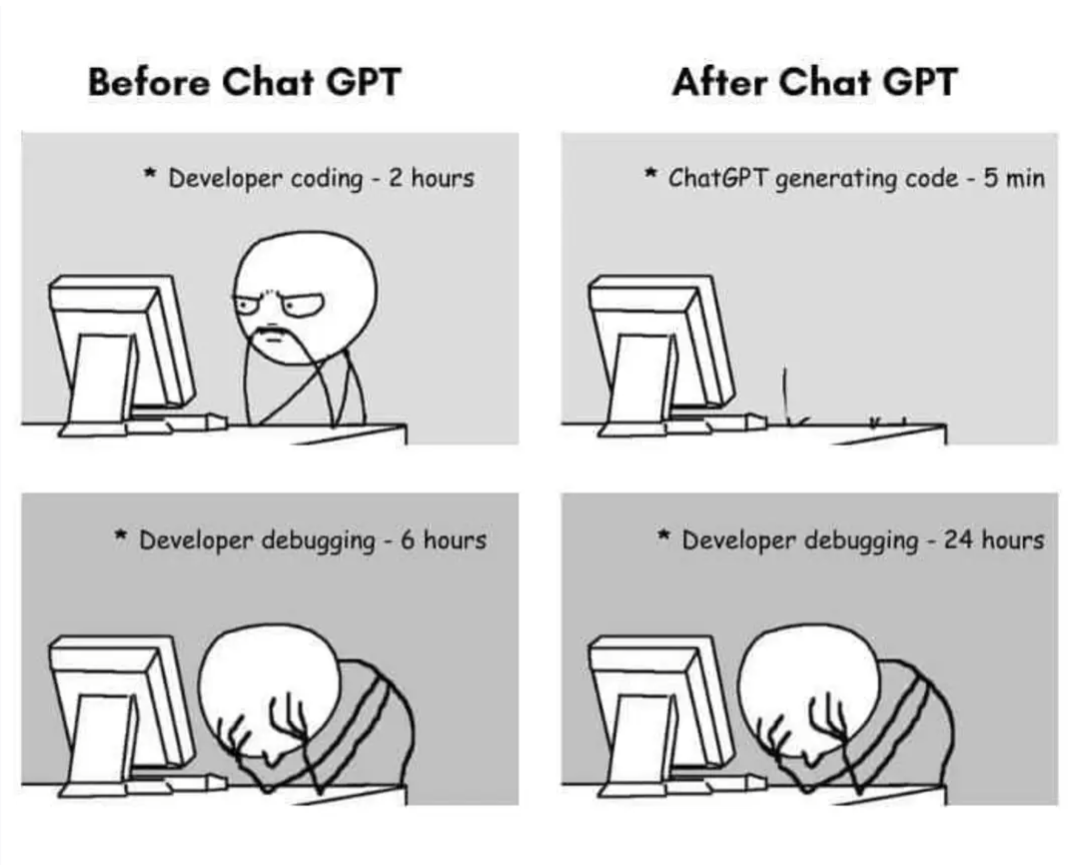

LLMs are statistical word association machines (or, more accurately, token association machines). So if you tell one not to make mistakes, it will likely weight its output towards including validation, checks, etc. It might still produce silly output claiming no mistakes were made despite having bugs or logic errors. But LLMs are just a tool! So use them for what they're good at and can actually do, not what they themselves claim they can do lol.
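To make "statistical token association" a bit more concrete, here's a toy sketch in Python: a bigram counter that predicts the next token purely from how often tokens followed each other in a tiny made-up corpus. This is a deliberate oversimplification (real LLMs use neural networks over long contexts, not raw bigram counts), and the corpus and function names are hypothetical, just for illustration.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional

def train_bigrams(text: str) -> Dict[str, Counter]:
    """Count how often each whitespace-separated token follows each other token."""
    tokens = text.lower().split()
    follows: Dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(tokens, tokens[1:]):
        follows[current][nxt] += 1
    return follows

def next_token_probs(follows: Dict[str, Counter], token: str) -> Dict[str, float]:
    """Turn follow-counts into a probability distribution over the next token."""
    counts = follows.get(token.lower(), Counter())
    total = sum(counts.values())
    return {t: c / total for t, c in counts.items()} if total else {}

def most_likely_next(follows: Dict[str, Counter], token: str) -> Optional[str]:
    """Pick the highest-probability next token, or None for an unseen token."""
    probs = next_token_probs(follows, token)
    return max(probs, key=probs.get) if probs else None

# Hypothetical "training data": the association is all the model knows.
corpus = (
    "no mistakes means add checks . "
    "no mistakes means add tests . "
    "no mistakes means add checks ."
)
model = train_bigrams(corpus)
print(most_likely_next(model, "add"))   # checks
print(next_token_probs(model, "add"))   # {'checks': 0.666..., 'tests': 0.333...}
```

"checks" wins only because it followed "add" more often in the text; nothing in the model verifies that any checks are actually correct.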

I've found it behaves like a stubborn toddler.

If you tell it not to do something, it will do it more. You need to give it positive instructions, not negative ones.