this post was submitted on 08 Jul 2024

843 points (96.8% liked)

Science Memes

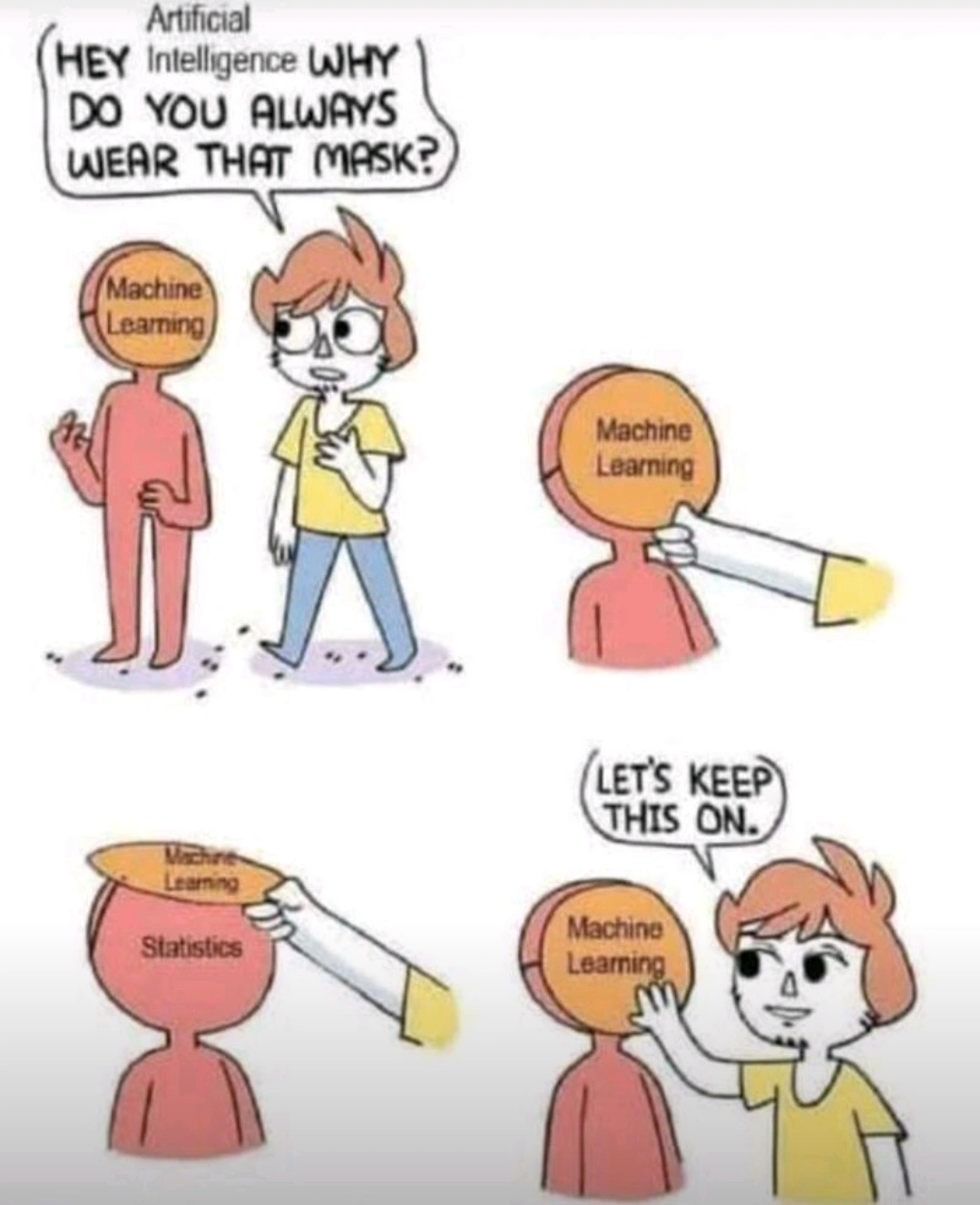

Universal function approximation - neural networks.

Auto-differentiation - algorithmic calculation of partial derivatives (aka gradients)

Backpropagation - when training a neural network (or most ML models trained by gradient descent, actually), you measure the difference between the model's predictions and the original labels with a loss function. The gradients of that loss are then sent back through the network to update the weights.

Parameter dimensionality - the number of trainable parameters behind the “neurons” in the neural network, i.e., the sizes of the weight matrices.
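To make the last three definitions a bit more concrete, here is a minimal sketch in plain Python. It assumes a toy network with a single tanh hidden unit and a squared-error loss. The names (`forward`, `backprop`, `numeric_grad`, `count_params`) and all the numbers are made up for illustration, not taken from any library. The gradients are derived by hand with the chain rule, which is the bookkeeping an auto-differentiation tool would do for you, and then checked against finite differences.

```python
import math

def forward(params, x):
    """Toy network: x -> h = tanh(w1*x + b1) -> pred = w2*h + b2."""
    w1, b1, w2, b2 = params
    h = math.tanh(w1 * x + b1)
    return w2 * h + b2, h

def loss(params, x, y):
    """Squared error between the prediction and the label."""
    pred, _ = forward(params, x)
    return 0.5 * (pred - y) ** 2

def backprop(params, x, y):
    """Gradient of the loss w.r.t. each parameter, output layer first."""
    w1, b1, w2, b2 = params
    pred, h = forward(params, x)
    d_pred = pred - y              # dL/dpred
    d_w2 = d_pred * h              # dL/dw2
    d_b2 = d_pred                  # dL/db2
    d_h = d_pred * w2              # error sent back to the hidden unit
    d_z = d_h * (1.0 - h * h)      # tanh'(z) = 1 - tanh(z)^2
    d_w1 = d_z * x                 # dL/dw1
    d_b1 = d_z                     # dL/db1
    return [d_w1, d_b1, d_w2, d_b2]

def numeric_grad(params, x, y, eps=1e-6):
    """Central finite differences, used only to sanity-check backprop."""
    grads = []
    for i in range(len(params)):
        up, down = list(params), list(params)
        up[i] += eps
        down[i] -= eps
        grads.append((loss(up, x, y) - loss(down, x, y)) / (2 * eps))
    return grads

def count_params(layer_sizes):
    """Weights plus biases of a fully connected net with these layer widths."""
    return sum(n_in * n_out + n_out
               for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))

params = [0.5, -0.1, 1.5, 0.2]     # arbitrary starting values for w1, b1, w2, b2
x, y = 0.8, 1.0                    # a single made-up training example

analytic = backprop(params, x, y)
numeric = numeric_grad(params, x, y)

initial_loss = loss(params, x, y)
for _ in range(200):               # plain gradient descent, learning rate 0.1
    grads = backprop(params, x, y)
    params = [p - 0.1 * g for p, g in zip(params, grads)]
final_loss = loss(params, x, y)
```

`count_params([1, 1, 1])` gives the 4 parameters of this toy network, and the same function applied to something like `[784, 128, 10]` shows how quickly the weight matrices grow with layer width.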

If that’s your argument, it’s worse than statistics imo. At least statistics has solid theorems and proofs (albeit for very controlled distributions). All DL has right now is a bunch of papers, most often published by large tech companies, with methods that may or may not work for the problem you’re working on.

The universal function approximation theorem is pretty dope tho. I’m not saying ML isn’t interesting; some parts of it are, but most of it is meh. It’s fine.
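For a feel of what the theorem is getting at, here is a rough sketch that builds a one-hidden-layer ReLU network by hand (no training) so that it linearly interpolates a target function, then measures how the worst-case error shrinks as hidden units are added. The construction, the choice of `sin` on `[0, pi]`, and the names `build_relu_net` and `max_error` are assumptions picked for illustration. It only demonstrates the idea on one smooth 1-D function and is not a proof.

```python
import math

def relu(z):
    return max(0.0, z)

def build_relu_net(f, a, b, n_hidden):
    """One-hidden-layer ReLU net that interpolates f at evenly spaced knots on [a, b]."""
    step = (b - a) / n_hidden
    knots = [a + i * step for i in range(n_hidden + 1)]
    values = [f(k) for k in knots]
    slopes = [(values[i + 1] - values[i]) / step for i in range(n_hidden)]
    # Hidden unit i computes relu(x - knots[i]); its output weight is the
    # change in slope at that knot, so the sum is piecewise linear.
    out_weights = [slopes[0]] + [slopes[i] - slopes[i - 1]
                                 for i in range(1, n_hidden)]
    out_bias = values[0]

    def net(x):
        hidden = (relu(x - k) for k in knots[:n_hidden])
        return out_bias + sum(w * h for w, h in zip(out_weights, hidden))

    return net

def max_error(f, net, a, b, samples=1000):
    """Largest absolute gap between f and the net on an evenly spaced grid."""
    grid = (a + (b - a) * i / samples for i in range(samples + 1))
    return max(abs(f(x) - net(x)) for x in grid)

errors = {}
for n_hidden in (4, 16, 64):
    net = build_relu_net(math.sin, 0.0, math.pi, n_hidden)
    errors[n_hidden] = max_error(math.sin, net, 0.0, math.pi)
```

The entries of `errors` get smaller as `n_hidden` grows, which is the flavor of the result: with enough hidden units, a single hidden layer can get as close as you like to a continuous function on a bounded interval. It says nothing about whether gradient descent will actually find those weights.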