Improving Beehaw

BLUF: The operations team at Beehaw has worked to increase site performance and uptime. This includes proactive monitoring to prevent problems from escalating and planning for future likely events.

Problem: Emails only sent to approved users, not denials; denied users can't reapply with the same username

- Solution: Denied users now get an email, and their usernames are freed up for reuse

Details:

- Disabled the Docker postfix container; Lemmy runs on a Linux host that can run postfix itself, without the extra overhead

- Modified various postfix components to accept localhost (same system) email traffic only

- Created two different scripts to:

  - Check the Lemmy database once in a while for denied users, send them an email, and delete the user from the database

    - The user can then use the same username to register again!

  - Send out emails to those users (and also make the other Lemmy emails look nicer)

- Sending so many emails from our provider caused the emails to end up in spam!! We had to change a bit of the outgoing flow

  - DKIM and SPF setup

  - Changed outgoing emails to relay through Mailgun instead of through our VPS

- Configured the Lemmy containers to use the host postfix as mail transport
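For anyone wanting to try something similar, here is a minimal sketch (not the actual Beehaw script) of the "email a denied user through the host postfix" step. The function names, subject line, wording, and addresses are all made up for illustration; the only assumption about the setup is that an MTA is listening on localhost port 25.

```python
import smtplib
from email.message import EmailMessage


def build_denial_email(username: str, to_addr: str, from_addr: str) -> EmailMessage:
    """Build a plain-text notice telling a denied applicant they may reapply.

    The subject and body text are placeholders, not Beehaw's real wording.
    """
    msg = EmailMessage()
    msg["Subject"] = "Your registration application"
    msg["From"] = from_addr
    msg["To"] = to_addr
    msg.set_content(
        f"Hi {username},\n\n"
        "Your application was not approved this time.\n"
        "Your username has been freed up, so you can apply again.\n"
    )
    return msg


def send_via_local_mta(msg: EmailMessage, host: str = "localhost", port: int = 25) -> None:
    """Hand the message to the mail server on the same machine (assumed postfix)."""
    with smtplib.SMTP(host, port) as smtp:
        smtp.send_message(msg)
```

Building and sending are kept separate so the message can be inspected (or logged) without touching the mail server. Finding denied users and deleting them from the database is left out on purpose, since that depends on the Lemmy schema.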

All is well?

Problem: NO file level backups, only full image snapshots

- Solution: Procured backup storage (Backblaze B2), set up system backups, and tested restoration (successfully)

Details:

- Requested funds from the Beehaw org to purchase cloud-based storage, B2 - approved (thank you for the donations)

- Installed and configured restic encrypted backups of key system files -> B2 'offsite'. This means even the Beehaw data saved there is encrypted, and no one else can read that information

- Verified scheduled backups are being run every day to B2. They include important information such as the Lemmy volumes, pictures, configurations for various services, and a database dump

- Verified restoration works! Had a small issue with the pictrs migration to object storage (B2). Restored the entire pictrs volume from the restic B2 backup successfully. Backups work!

sorry for that downtime, but hey.. it worked
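A rough sketch of what the backup and restore commands above can look like when driven from a script. This is an illustration, not the actual Beehaw setup: the bucket name, paths, and helper names are hypothetical. It assumes restic is installed and that credentials and the repository password are provided through restic's usual environment variables (`B2_ACCOUNT_ID`, `B2_ACCOUNT_KEY`, `RESTIC_PASSWORD`).

```python
import subprocess
from typing import List


def restic_backup_cmd(repo: str, paths: List[str]) -> List[str]:
    """Command line for backing up the given paths into a restic repository."""
    return ["restic", "-r", repo, "backup", *paths]


def restic_restore_cmd(repo: str, target: str, snapshot: str = "latest") -> List[str]:
    """Command line for restoring a snapshot into a target directory."""
    return ["restic", "-r", repo, "restore", snapshot, "--target", target]


def run(cmd: List[str]) -> None:
    """Run the command and raise if restic exits with a non-zero status."""
    subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # Hypothetical repository and paths, for illustration only.
    repo = "b2:example-bucket:lemmy"
    run(restic_backup_cmd(repo, ["/srv/lemmy/volumes", "/srv/lemmy/db-dump.sql"]))
```

Restoring into a scratch directory with `restic_restore_cmd(repo, "/tmp/restore-test")` and comparing the result is a cheap way to check a backup before you actually need it.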

Problem: No metrics/monitoring; what do we focus on to fix?

- Solution: Configured external system monitoring via SNMP, plus internal monitoring for services/scripts

Details:

- Using an existing self-hosted Network Monitoring Solution (thus, no cost), established monitoring of Beehaw.org systems via SNMP

- This gives us important metrics such as network bandwidth usage, memory and CPU usage (tracked down to which processes are using the most), parsed system event logs, and disk IO/usage

- Host-based monitoring that is configured to act on known error occurrences and attempt to resolve them automatically. Such as the Lemmy app; crashing; again

- Alerting for unexpected events or prolonged outages. Spams the crap out of @admin and @Lionir. They love me
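The "detect a known failure and fix it automatically" idea can be sketched in a few lines. This is a generic illustration, not the tooling actually used here: `watchdog_step`, the failure threshold, and the two callables are all hypothetical. In practice `is_healthy` might be an HTTP check against the site, and `restart` might restart a container.

```python
from typing import Callable


def watchdog_step(
    is_healthy: Callable[[], bool],
    restart: Callable[[], None],
    failures: int,
    threshold: int = 3,
) -> int:
    """Run one health check and return the updated consecutive-failure count.

    A restart is only triggered after `threshold` failed checks in a row,
    so a single slow response does not bounce the service.
    """
    if is_healthy():
        return 0  # healthy again: reset the counter
    failures += 1
    if failures >= threshold:
        restart()
        return 0  # give the service a fresh start before counting again
    return failures
```

Calling this from a timer or cron job every minute, and carrying the returned count into the next call, gives a simple self-healing loop. Alerting a human would hang off the same failure counter.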

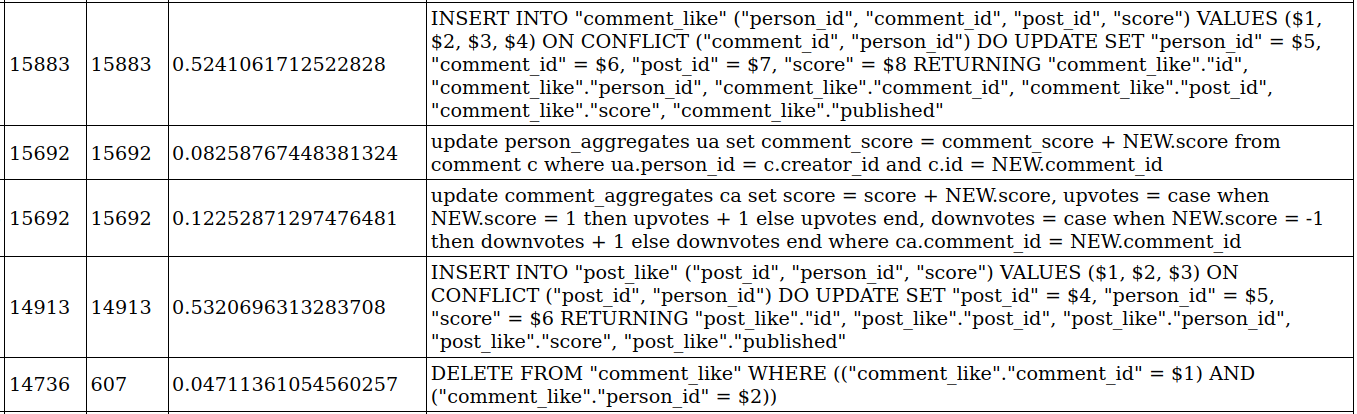

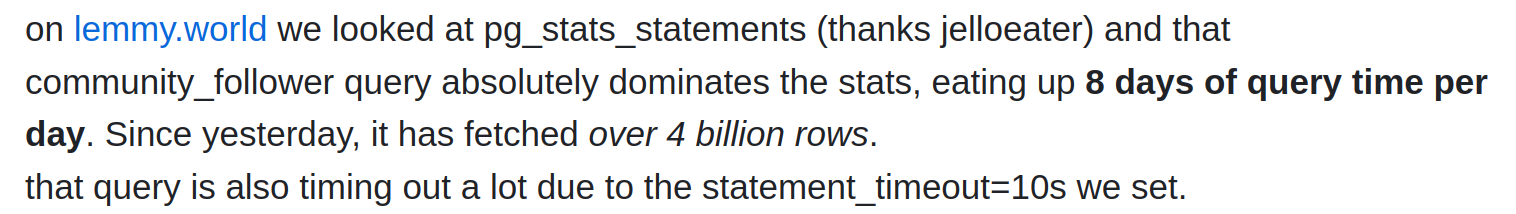

- Database-level tracking for 'expensive' queries to know where the time and effort is spent for Lemmy. Helps us report these issues to the developers and get them fixed.
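As a hedged sketch of what "tracking expensive queries" can look like with the `pg_stat_statements` extension: the SQL below assumes PostgreSQL 13 or newer, where the cumulative time column is named `total_exec_time` (older releases call it `total_time`). The helper `rank_by_total_time` is a made-up name; it just sorts rows you have already fetched, however you fetch them.

```python
from typing import Dict, List

# Assumed query; adjust the column name for PostgreSQL versions before 13.
TOP_QUERIES_SQL = """
SELECT query, calls, total_exec_time
FROM pg_stat_statements
ORDER BY total_exec_time DESC
LIMIT 20;
"""


def rank_by_total_time(rows: List[Dict], limit: int = 5) -> List[Dict]:
    """Sort statement stats by cumulative time and add mean milliseconds per call."""
    ranked = sorted(rows, key=lambda r: r["total_exec_time"], reverse=True)
    result = []
    for row in ranked[:limit]:
        # Guard against division by zero for statements with no recorded calls.
        mean_ms = row["total_exec_time"] / row["calls"] if row["calls"] else 0.0
        result.append({**row, "mean_ms": mean_ms})
    return result
```

Sorting by total time rather than mean time is deliberate: a fast query that runs constantly can cost more overall than a slow one that runs once a day.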

With this information we've determined the areas to focus on are database performance and storage concerns. We'll be moving our image storage to a CDN if possible to help with bandwidth and storage costs.

Peace of mind, and let the poor admins sleep!

Problem: Lemmy is really slow and more resources for it are REALLY expensive

- Solution: Based on metrics (see above), tuned and configured various applications to improve performance and uptime

Details:

- I know it doesn't seem like it, but really, uptime has been better with a few exceptions

- Modified NGINX (web server) to cache items and load balance between UI instances (currently running 2 lemmy-ui containers)

- Set up a frontend Varnish cache to decrease backend (Lemmy/DB) load. It serves cached images and other content before requests hit the web server; this saves CPU resources and connections, but does not reduce bandwidth costs

- Artificially restricting resource usage (memory, CPU) to prove that Lemmy can run on less hardware without a ton of problems. Need to reduce the cost of running Beehaw

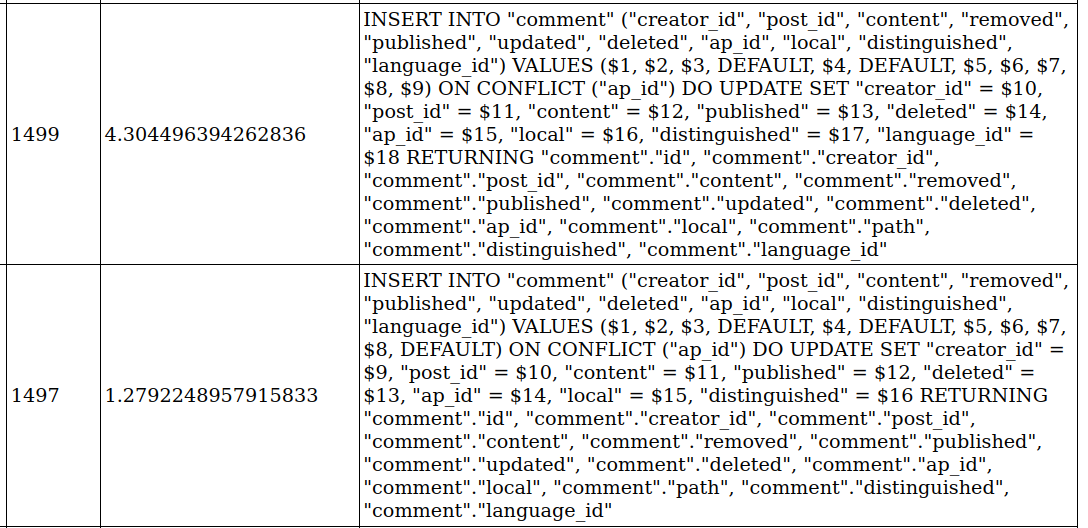

THE DATABASE

This gets its own section. Look, the largest issue with Lemmy performance is currently the database. We've spent a lot of time attempting to track down why and what it is, and then fixing what we reliably can. However, none of us are Rust developers or database admins. We know where Lemmy spends its time in the DB, but not why, and we really don't know how to fix it in the code. If you've complained about why Lemmy/Beehaw is so slow, this is it; this is the reason.

So since I can't code Rust, what do we do? Fix it where we can! That means PostgreSQL server setting tuning and changes. We changed the following items in PostgreSQL to give better performance based on our load and hardware:

huge_pages = on # requires sysctl.conf changes and a system reboot

shared_buffers = 2GB

max_connections = 150

work_mem = 3MB

maintenance_work_mem = 256MB

temp_file_limit = 4GB

min_wal_size = 1GB

max_wal_size = 4GB

effective_cache_size = 3GB

random_page_cost = 1.2

wal_buffers = 16MB

bgwriter_delay = 100ms

bgwriter_lru_maxpages = 150

effective_io_concurrency = 200

max_worker_processes = 4

max_parallel_workers_per_gather = 2

max_parallel_maintenance_workers = 2

max_parallel_workers = 6

synchronous_commit = off

shared_preload_libraries = 'pg_stat_statements'

pg_stat_statements.track = all

Now I'm not saying all of these had an effect, or even a cumulative effect; these are just the values we've changed. Be sure to use your own system values and not copy the above. The three largest changes I'd say are key are synchronous_commit = off, huge_pages = on and work_mem = 3MB. This article may help you understand a few of those changes.
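To show why these values need to match your own hardware, here is some back-of-the-envelope arithmetic for two of the settings above. It is a sketch under stated assumptions: a 2MB huge page size (the common default on x86-64 Linux), and the helper names are made up. The huge page count is a lower bound only, because PostgreSQL's shared memory covers more than `shared_buffers`.

```python
MB = 1024 * 1024
GB = 1024 * MB


def min_huge_pages(shared_buffers_bytes: int, huge_page_bytes: int = 2 * MB) -> int:
    """Lower bound on huge pages needed just to hold shared_buffers."""
    # Ceiling division, so a partial page still counts as a whole page.
    return -(-shared_buffers_bytes // huge_page_bytes)


def work_mem_if_one_op_each(work_mem_bytes: int, max_connections: int) -> int:
    """Memory used if every connection runs exactly one sort or hash at once."""
    return work_mem_bytes * max_connections


print(min_huge_pages(2 * GB))                       # 1024
print(work_mem_if_one_op_each(3 * MB, 150) // MB)   # 450
```

So `shared_buffers = 2GB` needs at least 1024 huge pages reserved (via `vm.nr_hugepages` in sysctl.conf, plus some headroom), and `work_mem = 3MB` with 150 connections can mean 450MB on top of that. Since `work_mem` applies per sort or hash operation, a complex query can use several multiples of it.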

With these changes, the database seems to be working a damn sight better even under heavier loads. There are still a lot of inefficiencies that can be fixed with the Lemmy app for these queries. A user phiresky has made some huge improvements there and we're hoping to see those pulled into main Lemmy on the next full release.

Problem: Lemmy errors aren't helpful and sometimes don't even reach the user (UI)

- Solution: Make our own UI with ~~blackjack and hookers~~ propagation for backend Lemmy errors. Some of these fixes have been merged into the main Lemmy codebase

Details:

- Yeah, we did that. Including some other UI niceties. Main thing is, you need to pull in the lemmy-ui code, make your changes locally, and then use that custom image as your UI for docker

- Made some changes to a custom lemmy-ui image, such as handling a few JSON parse errors better and improving the feedback given to the user

- Remove and/or move some elements around, change the CSS spacing

- Change the node server to listen to system signals sent to it, such as a graceful docker restart

- Other minor changes to assist caching, changed the container image to Debian based instead of Alpine (reducing crashes)

The end?

No, not by far. But I am about to hit the character limit for Lemmy posts. There have been many other changes and additions to Beehaw operations; these are but a few of the key ones. I'm sharing them with the broader community so those of you also running Lemmy can see if these changes help you too. Ask questions and I'll discuss and answer what I can; no secret sauce or passwords though; I'm not ChatGPT.

Shout out to @[email protected] , @[email protected] and @[email protected] for continuing to work with me to keep Beehaw running smoothly.

Thanks all you Beeple, for being here and putting up with our growing pains!