PC Gaming

For PC gaming news and discussion. PCGamingWiki

Rules:

- Be Respectful.

- No Spam or Porn.

- No Advertising.

- No Memes.

- No Tech Support.

- No questions about buying/building computers.

- No game suggestions, friend requests, surveys, or begging.

- No Let's Plays, streams, highlight reels/montages, random videos or shorts.

- No off-topic posts/comments, within reason.

- Use the original source, no clickbait titles, no duplicates. (Submissions should be from the original source if possible, unless from paywalled or non-English sources. If the title is clickbait or lacks context you may lightly edit the title.)

AMD exists, people.

Please put your money where your mouth is.

Or, you know, buy an AMD card and quit giving your money to the objectively worse company.

Vote with your wallets. DLSS and Ray Tracing aren't worth it to support this garbage.

Wish more gamers would, but that ship has long sailed, unfortunately. I mean, look at what the majority of gamers tolerate now.

For the people looking to upgrade: always check the used market in your area first. For now, the best thing to do is obviously to try to get a 40 series card from the drones that must have the 50 series.

I've got the feeling that GPU development is plateauing: new flagships consume an immense amount of power and the sizes are humongous. I do give DLSS, local AI, and similar technologies the benefit of the doubt, but they're just not there yet. GPUs should be more efficient and improve in other ways.

And then you pick up a Steam Deck and play games that were originally meant to run on cards the size of a Steam Deck.

I’ve said for a while that AMD will eventually eclipse all of the competition, simply because their design methodology is so different compared to the others. Intel has historically relied on simply cramming more into the same space. But they’re reaching theoretical limits on how small their designs can be; they’re being limited by things like atom size and the speed of light across the distance of the chip. AMD, on the other hand, has historically used the same dies for as long as possible and relied on improving their efficiency to get gains instead. They were historically a generation (or even two) behind Intel in terms of pure hardware power, but still managed to compete because they used the chips more efficiently. As AMD also begins to approach those theoretical limits, I think they’ll do a much better job of actually eking out more computing power.

And the same goes for GPUs. With Nvidia recently resorting to the “just make it bigger and give it more power” design philosophy, it likely means they’re also reaching theoretical limitations.

AMD never used chips "more efficiently". They hit gold with the Ryzen design, but everything before it since the Athlon was horrible and more useful as a room heater. And before the Athlon it was even worse. The K6/K6-2 were funny little buggers that extended the life of Socket 7, but they lacked a lot of features, and don't get me started on their DX4/5 stuff, which frequently died in spectacular ways.

Ryzen works because of chiplets and the stacking of the cache. Add some very clever stuff in the pipeline, which I don't presume to understand, and the magic is complete. AMD is beating Intel at its own game: its ticks and tocks are way better and, most importantly, executable. And that is something Intel hasn't really been able to do for several years. It only now seems to be returning.

And let's not forget the USB problems with Ryzen 2/3 and the memory compatibility woes of Ryzen's past and, some say, present. Ryzen is good, but it's not "clean".

In GPU design AMD clearly does the same but executes worse than Nvidia. The 9070 can't even match its own predecessor, and the 7900 XTX is again a room heater and anything but efficient. And let's not talk about what came before. The 6xxx series were good enough but troublesome for some, and the Radeon VII was a complete shitfest.

Now, with the 9070, AMD once again, for the umpteenth time, promises that the generation after will fix all its woes. That that one can compete with Nvidia.

Trouble is, they've been saying that for over a decade.

Intel is the one looking at GPU design differently. The only question is: will they continue, or axe the division now that Gelsinger is gone? That would be monumentally stupid, but if we can count on one thing, it's the horrible shortsightedness of corporate America. Especially when Wall Street is involved. And with Intel, Wall Street is heavily involved. Vultures are circling.

lol this reminds me of whatever that card was back in the 2000s or so, where you could literally draw a trace with a pencil to upgrade the lower version to the higher version.

I barely remember this anymore but the downgrade had certain things deactivated. Something like my card had four "pipelines" and the high-end one had eight, so a minor hardware modification could reactivate them. It was risky though, because often imperfections came out of the manufacturing process, and then they would just deactivate the problem areas and turn it into a lower-end version.

After a little while, someone put out drivers that could simulate the modification without physically touching the card. You'd read about softmod and hardmod for the lower-end Radeon cards.

I used the softmod and 90% of the time it worked perfectly, but there was definitely an issue where some textures in certain games would have weird artifacting in a checkerboard pattern. If I disabled the softmod the artifacting wouldn't happen.

Yeah, those were the days when cost control simply meant using the same PCB with the traces left out. There were also quite a few cards that used the exact same PCB, traces intact, where you could simply flash the next-tier card's BIOS and get significant performance bumps.

Did a few of those mods myself back in the day, those were fun times.

Ok now how do I turn my 2070s into a 5090? 😅

Get 500 dollars then use AI to generate the other 3/4 of the money and buy a 5090.

I remember using the pencil trick on my old AMD Duron processor to unlock it. 🤣

Just like I rode my 1080ti for a long time, it looks like I'll be running my 3080 for a while lol.

I hope in a few years when I'm actually ready to upgrade that the GPU market isn't so dire... All signs are pointing to no unfortunately.

Just like I rode my 1080ti for a long time

You only skipped a generation. What are you talking about?

I had the 1080ti well after the 3080 release. I got a great deal and had recently switched to 1440p, so I pulled the trigger on a 3080 not long before the 4000 series cards dropped.

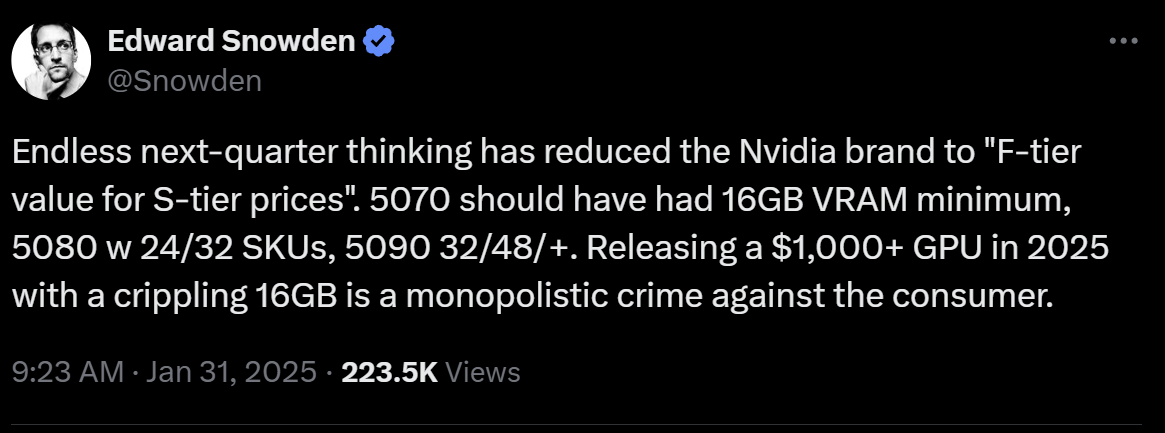

Nvidia is just straight up conning people.

They tried this with the 4080 12GB... This time they dug in and went whole hog with all the 5080s.

Shame AMD is not going to be competitive.

Where's the antitrust regulation?

Trump's America has none. But the FCC is suing public broadcast services. So that's what we get.

Jensen Huang is meeting with Trump to make sure there is none.